Are you over 18 and want to see adult content?

More Annotations

A complete backup of https://sengakuji.or.jp

Are you over 18 and want to see adult content?

A complete backup of https://3forks.com

Are you over 18 and want to see adult content?

A complete backup of https://holidayworld.cz

Are you over 18 and want to see adult content?

A complete backup of https://seemaengineers.com

Are you over 18 and want to see adult content?

A complete backup of https://fairtradeamerica.org

Are you over 18 and want to see adult content?

A complete backup of https://minib.cz

Are you over 18 and want to see adult content?

A complete backup of https://pondinformer.com

Are you over 18 and want to see adult content?

A complete backup of https://ktwu.org

Are you over 18 and want to see adult content?

A complete backup of https://speedreport.de

Are you over 18 and want to see adult content?

A complete backup of https://broekhuis-autos.nl

Are you over 18 and want to see adult content?

A complete backup of https://fightcps.com

Are you over 18 and want to see adult content?

A complete backup of https://aig.org.au

Are you over 18 and want to see adult content?

Favourite Annotations

A complete backup of impactledscreens.com

Are you over 18 and want to see adult content?

A complete backup of barcelona-budget.net

Are you over 18 and want to see adult content?

A complete backup of simpleregistry.com

Are you over 18 and want to see adult content?

A complete backup of thesteakager.com

Are you over 18 and want to see adult content?

A complete backup of blogdetoledo2011.blogspot.com

Are you over 18 and want to see adult content?

A complete backup of pioneerlibrarysystem.org

Are you over 18 and want to see adult content?

Text

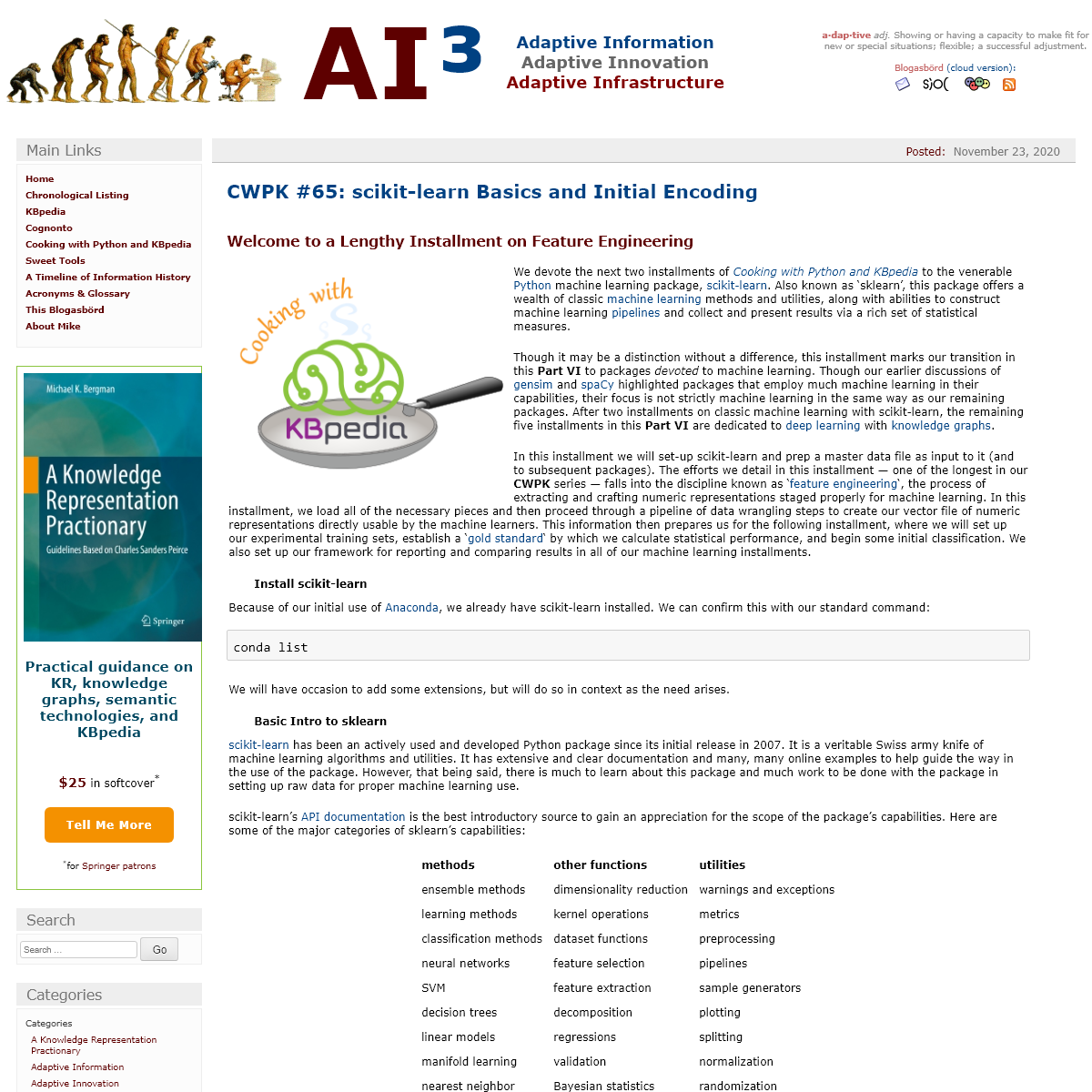

AI³

ADAPTIVE INFORMATION

ADAPTIVE INNOVATION

ADAPTIVE INFRASTRUCTURE

A·DAP·TIVE _adj._ Showing or having a capacity to make fit for new or special situations; flexible; a successful adjustment.

Blogasbörd (cloud version)

POSTED: NOVEMBER 23, 2020

CWPK #65: SCIKIT-LEARN BASICS AND INITIAL ENCODING

WELCOME TO A LENGTHY INSTALLMENT ON FEATURE ENGINEERING

We devote the next two installments of _Cooking with Python and KBpedia_ to the venerable Python machine learning package, scikit-learn. Also known as ‘sklearn’, this package offers a wealth of classic machine learning methods and utilities, along with the ability to construct machine learning pipelines and to collect and present results via a rich set of statistical measures. Though it may be a distinction without a difference, this installment marks our transition in this PART VI to packages _devoted_ to machine learning. Our earlier discussions of gensim and spaCy highlighted packages that employ much machine learning in their capabilities, but their focus is not strictly machine learning in the same way as our remaining packages. After two installments on classic machine learning with scikit-learn, the remaining five installments in this PART VI are dedicated to deep learning with knowledge graphs.

In this installment we will set up scikit-learn and prepare a master data file as input to it (and to subsequent packages). The efforts we detail in this installment, one of the longest in our CWPK series, fall into the discipline known as ‘feature engineering’, the process of extracting and crafting numeric representations staged properly for machine learning. We load all of the necessary pieces and then proceed through a pipeline of data wrangling steps to create our vector file of numeric representations directly usable by the machine learners. This work prepares us for the following installment, where we will set up our experimental training sets, establish a ‘gold standard’ by which we calculate statistical performance, and begin some initial classification. We also set up our framework for reporting and comparing results in all of our machine learning installments.

INSTALL SCIKIT-LEARN

Because of our initial use of Anaconda, we already have scikit-learn installed. We can confirm this with our standard command:

```
conda list
```
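If you prefer to check from within Python (for example, in a Jupyter notebook), importing the package and printing its version is a quick alternative to scanning the full `conda list` output. This is a minimal sketch that assumes scikit-learn is importable in the active environment:

```python
# Confirm scikit-learn is importable and report its version
import sklearn

version = sklearn.__version__
print('scikit-learn version:', version)
```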

We will have occasion to add some extensions, but will do so in context as the need arises.

BASIC INTRO TO SKLEARN

scikit-learn has been an actively used and developed Python package since its beginnings in 2007. It is a veritable Swiss army knife of machine learning algorithms and utilities. It has extensive and clear documentation and many, many online examples to help guide the way in the use of the package. That being said, there is much to learn about this package, and much work to be done in setting up raw data for proper machine learning use. scikit-learn’s API documentation is the best introductory source for gaining an appreciation of the scope of the package’s capabilities. Here are some of the major categories of sklearn’s capabilities:

| METHODS | OTHER FUNCTIONS | UTILITIES |
|---|---|---|
| ensemble methods | dimensionality reduction | warnings and exceptions |
| learning methods | kernel operations | metrics |
| classification methods | dataset functions | preprocessing |
| neural networks | feature selection | pipelines |
| SVM | feature extraction | sample generators |
| decision trees | decomposition | plotting |
| linear models | regressions | splitting |
| manifold learning | validation | normalization |
| nearest neighbor | Bayesian statistics | randomization |

sklearn offers a diversity of supervised and unsupervised learning methods, as well as dataset transformations and data loading routines. There are about a dozen supervised methods in the package, and nearly ten unsupervised methods. Multiple utilities exist for transferring datasets to other Python data science packages, including the pandas, gensim and PyTorch ones used in CWPK. Other prominent data formats usable with sklearn include SciPy and its binary formats, NumPy arrays, the libSVM sparse format, and common formats such as CSV, Excel, JSON and SQL (these last four typically read in through pandas). sklearn also provides converters for translating string and categorical values into numeric representations usable by the learning methods. A user guide provides examples of how to use most of these capabilities, and a related projects list names dozens of other Python packages that work closely with sklearn. Online searches turn up tens to hundreds of examples of how to work with the package. We only touch upon a few of scikit-learn’s capabilities in our series. But, clearly, the package’s scope warrants having it as an essential part of your data science toolkit.

PREPPING THE TEXT DATA

In earlier installments we introduced the three main portions of KBpedia data, STRUCTURE, ANNOTATIONS and PAGES, that can contribute features to our machine learning efforts. What we now want to do is consolidate these parts into a single master file that may form the reference source for our learning efforts moving forward. Mixing and matching various parts of this master data will enable us to generate a variety of dataset configurations that we may test and compare. Overall, there are about 15 steps in this process of creating a master input file. This is one of the most time-consuming tasks in the entire CWPK effort. There is a good reason why data preparation is given such prominent recognition in most discussions of machine learning. However, it is also the case that stepwise examples of how _EXACTLY_ to conduct such preparations are not well documented. As a result, we try to provide more than the standard details below. Here are the major steps we undertake to prepare our KBpedia data for machine learning:

1. ASSEMBLE CONTRIBUTING DATA PARTS

To refresh our memories, here are the three key input files to our master data compilation:

* STRUCTURE – C:/1-PythonProjects/kbpedia/v300/extractions/data/graph_specs.csv
* ANNOTATIONS – C:/1-PythonProjects/kbpedia/v300/extractions/classes/Generals_annot_out.csv
* PAGES – C:/1-PythonProjects/kbpedia/v300/models/inputs/wikipedia-trigram.txt

We can inspect these three input files in turn, in the order listed (adjust for your own file references):

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/extractions/data/graph_specs.csv')
df
```

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/extractions/classes/Generals_annot_out.csv')
df
```

In the case of the PAGES file, we need to convert its .txt extension to .csv, add a header row of `id, text` as its first row, and remove any commas from its ID field. (These steps were not needed for the previous two files, since they were already in CSV format.)

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/wikipedia-trigram.csv')
df
```

2. MAP FILES

We inspect each of the three files and create a master lookup table for what each includes (not shown). We see that only the ANNOTATIONS file has the correct number of reference concepts. It also has the largest number of fields (columns). As a result, we will use that file as our target for incorporating the information in the other two files. (By the way, entries marked with ‘NaN’ are empty.) We will use our IDs (called ‘source’ in the STRUCTURE file) as the index key for matching the extended information.

Prior to mapping, we note that the ANNOTATIONS file does not have the updated underscores in place of the initial hyphens (see CWPK #48), so we load up that file and manually make that change to the id, superclass, and subclass fields. We also delete two fields in the ANNOTATIONS file that are of no use to our learning efforts, editorialNote and isDefinedBy. After these changes, we name the file C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master.csv. This is the master file we will use for consolidating our input information for all learning efforts moving forward.

NOTE: During development of these routines I typically use temporary master files for saving each interim step, which provides the opportunity to inspect each transitional step before moving forward. I only provide the concluding versions of these steps in C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master.csv, which is listed on GitHub under the https://github.com/Cognonto/CWPK/tree/master/sandbox/models/inputs directory, along with many of the other inputs noted below. If you want to inspect interim versions as outlined in the steps below, you will need to reconstruct the steps locally.

3. PREPARE STRUCTURE FILE

In the STRUCTURE file, only two fields occur that are not already in the ANNOTATIONS file, namely the count of direct subclass children (‘weight’) and the SuperType (ST). The ST field is many-to-one, which means we need to loop over that field and combine instances into a single cell. We will look to CWPK #44 for some example looping code by iterating instances, only now using the standard ',' separator in place of the double pipes '||' used earlier.

Since we need to remove the ‘kko:’ prefix from the STRUCTURE file (to correspond to the convention in the ANNOTATIONS file), we make a copy of the file and rename and save it to C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_struct_temp.csv. Since we now have an altered file, we can also remove the target column and rename source → id and weight → count. With these changes we then pull in our earlier code and create the routine that will put all of the SuperTypes for a given reference concept (RC) on the same line, separated by the standard ',' separator. We will name the new output file C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_structure.csv. Here is the code (note the input file is sorted on id):

```python
import csv

in_file = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_struct_temp.csv'
out_file = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_structure.csv'

# Assumes the temp file's columns are ordered id, count, SuperType (ST column name assumed)
header = ['id', 'count', 'SuperType']

with open(in_file, 'r', encoding='utf8') as input_f:
    reader = csv.DictReader(input_f, delimiter=',', fieldnames=header)
    with open(out_file, mode='w', encoding='utf8', newline='') as output:
        csv_out = csv.writer(output)
        x_id = ''
        x_cnt = ''
        x_st = ''
        row_out = ()
        for row in reader:
            r_id = row['id']
            r_cnt = row['count']
            r_st = row['SuperType']
            if r_id != x_id:                  # Note 1: a new RC begins
                csv_out.writerow(row_out)     # write out the previous RC
                x_id = r_id
                x_cnt = r_cnt
                x_st = r_st
            else:
                x_st = x_st + ',' + r_st      # Note 2: same RC, append its ST
            row_out = (x_id, x_cnt, x_st)
        csv_out.writerow(row_out)             # write the last RC, which the loop never flushes

print('KBpedia SuperType flattening is complete . . .')
```

This routine does produce an extra line at the top of the file that needs to be removed manually (the code here does not handle it). The routine watches for a change in the reference concept ID (1), which signals that a new series is starting. The SuperTypes encountered for the same RC are added to a single list string (2). To see the resulting file, here’s the statement:

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_structure.csv')
df
```
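The same flattening can also be sketched with a pandas `groupby`, which does not depend on the input being sorted and does not emit the extra top line. This is a minimal sketch, not the routine used above; it assumes the columns are named `id`, `count` and `SuperType` (as after the renames described earlier), that the SuperType cells contain no missing values, and the toy values are hypothetical:

```python
import pandas as pd

def flatten_supertypes(df: pd.DataFrame) -> pd.DataFrame:
    """Collapse many-to-one SuperType rows into one row per RC id.

    Assumes columns 'id', 'count' and 'SuperType' with no missing STs.
    """
    return (
        df.groupby('id', sort=False)          # keep ids in first-seen order
          .agg({'count': 'first',              # count repeats per RC; keep one
                'SuperType': ','.join})        # join all STs with ','
          .reset_index()
    )

# Hypothetical toy input standing in for kbpedia_struct_temp.csv
toy = pd.DataFrame({
    'id': ['Animal', 'Animal', 'Plant'],
    'count': [12, 12, 5],
    'SuperType': ['Animals', 'Organisms', 'Plants'],
})
flat = flatten_supertypes(toy)
print(flat)
```

On the real file, the result could be written with `flat.to_csv(out_file, index=False)`.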

4. PREPARE PAGES FILE

We are dealing with files here that strain the capabilities of standard spreadsheets. Large files become hard to load and, even when they do load, sluggish (minutes of processing with LibreOffice, for example). I have needed to find some alternative file editors to handle large files.

NOTE: I have tried numerous large-file editors that I discuss further in the CWPK #75 conclusion to this series, but at present I am using the free ‘hackable’ Atom editor. Atom is a highly configurable editor from GitHub suitable for large files. It has a rich ecosystem of ‘packages’ that provide specific functionality, including one (tablr) that provides CSV table editing and manipulation. If Atom proves inadequate, which would likely force me to purchase an alternative, my current evaluations point to EmEditor, which also has a limited free option and a 30-day trial of the paid version ($40 first year, $20 yearly thereafter). Finally, in order to use Atom properly on CSV files, it turns out I also needed to fix a known tablr JS issue.

Because we want to convert our PAGES file to a single vector representation by RC (the same listing as in the ANNOTATIONS file), we will hold off on finishing this file for the moment. We return to this question below.

5. CREATE MERGE ROUTINE

OK, so we now have properly formatted files for incorporation into our new master. Our files have reached a size where combining or joining them should be done programmatically, not via spreadsheet utilities. pandas offers a number of join and merge routines, some based on row concatenations, others based on joins such as inner or outer patterned on SQL. In our case, we want what is called a ‘left join’, wherein the left-specified file retains all of its rows, while the right file matches where it can. Clearly, there are many needs and ways for merging files, but here is one example of a ‘left join’:

```python
import pandas as pd

file_a = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_1.csv'
file_b = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_structure.csv'
file_out = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_2.csv'

df_a = pd.read_csv(file_a)
df_b = pd.read_csv(file_b)

# how='left' keeps every row of df_a; without it, pd.merge defaults to an inner join.
# Both sources share the 'id' column name, so on='id' replaces left_on/right_on.
merged_left = pd.merge(left=df_a, right=df_b, how='left', on='id')

# What's the size of the output data?
merged_left.shape
```

```python
merged_left
```

```python
merged_left.to_csv(file_out)
```

This works well, and it is the pattern we will use for other merges. We have the green light to continue with our data preparations.
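Because a left join silently keeps master rows that find no partner, it can be worth checking the result. pandas’ `merge` accepts `validate` (raises an error if the key is duplicated where it should not be) and `indicator` (adds a `_merge` column recording where each row was found). A minimal sketch; the toy frames are hypothetical stand-ins for the master and structure files:

```python
import pandas as pd

# Hypothetical toy frames standing in for the master and structure files
master = pd.DataFrame({'id': ['Animal', 'Plant', 'Mineral'],
                       'prefLabel': ['animal', 'plant', 'mineral']})
structure = pd.DataFrame({'id': ['Animal', 'Plant'],
                          'count': [12, 5]})

# validate='one_to_one' raises MergeError if 'id' repeats on either side;
# indicator=True adds a '_merge' column: 'both', 'left_only' or 'right_only'
merged = pd.merge(master, structure, how='left', on='id',
                  validate='one_to_one', indicator=True)

unmatched = merged.loc[merged['_merge'] == 'left_only', 'id'].tolist()
print(merged.shape)   # same row count as master, plus the added columns
print(unmatched)      # master rows that found no structure match
```

A duplicated `id` in the structure file (for example, if the SuperType flattening were skipped) would make this merge fail loudly rather than multiply master rows.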

6. FIX COLUMNS IN MASTER FILE

We now return our attention to the master file. There are many small steps that must be taken to get the file into shape for encoding it for use by the scikit-learn machine learners. Preferably, of course, these steps get combined (once they are developed and tested) into an overall processing pipeline. We will discuss pipelines in later installments. But, for now, in order to understand many of the particulars involved, we will proceed baby step by baby step through these preparations. This does mean, however, that we need to wrap each of these steps in the standard routines of opening and closing files and identifying the columns with the active data. Generally, at the top of each baby-step routine, all of which use pandas, we open the master from the prior step and then save the results under a new master name. This enables us to run the routine multiple times as we work out the kinks, and to return to prior versions should we later discover processing issues:

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master.csv')
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_1.csv'
df
```

We can always invoke some of the standard pandas calls to see what the current version of the master file contains:

```python
df.info()
```

```python
cols = list(df.columns.values)
print(cols)
```

We can also manipulate, drop, or copy columns, or change their display order:

```python
df.drop('CleanDef', axis=1, inplace=True)
# Reorder columns by listing their names in the desired order
# (hypothetical: rearrange this list as needed)
new_order = list(df.columns)
df = df[new_order]
df.to_csv(out_f)
```

```python
# Copies contents in 'SuperType' column to a new column location, 'pSuperType'
df['pSuperType'] = df['SuperType']
df.to_csv(out_f)
```

We will first focus on the ‘definitions’ column in the master file. In addition to duplicating input files under an alternative output name, we will also move any changes in column data to new columns. This makes it easier to see changes and overwrites and to recover prior data. Again, once the routines are running correctly, it is possible to collapse this overkill into pipelines.

One of our earlier preparatory steps was to ensure that the Cyc hrefs first mentioned in CWPK #48 were removed from certain KBpedia definitions that had been obtained from OpenCyc. As before, we use the amazing BeautifulSoup HTML parser:

```python
# Removal of Cyc hrefs
from bs4 import BeautifulSoup

cleandef = []
for row in df['definition']:
    line = str(row)
    soup = BeautifulSoup(line, 'html.parser')
    tags = soup.select('a')
    if tags:
        for item in tags:
            item.unwrap()              # drop the <a> markup, keep its label text
        item_text = soup.get_text()
    else:
        item_text = line
    cleandef.append(item_text)
df['CleanDef'] = cleandef              # write to a new column, keep the original
df.to_csv(out_f)
print('File written and closed.')
```

This example also shows us how to loop over a pandas column. The routine finds each link, unwraps its opening and closing tags, and retains the link label while removing the link HTML.

In reviewing our data, we also observe that we have some string quoting issues and a few instances of ‘smart quotes’ embedded within our definitions:

```python
# Forcing quotes around all definitions
cleandef = []
quote = '"'
for row in df['CleanDef']:
    line = str(row)
    if not line.startswith(quote):
        line = quote + line + quote
        line = line.replace('"""', '"')
    cleandef.append(line)              # append every row so the length matches df
df['definition'] = cleandef
df.drop('Unnamed: 0', axis=1, inplace=True)
df.drop('CleanDef', axis=1, inplace=True)
df.to_csv(out_f)
print('File written and closed.')
```

We also need to ‘normalize’ the definitions text by converting it to lower case, removing punctuation and stop words, and making other refinements. There are many useful text processing techniques in the following code block:

```python
# Normalize the definitions
from string import punctuation

import pandas as pd
from gensim.parsing.preprocessing import remove_stopwords

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_1.csv')
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_3.csv'
more_stops = []    # hypothetical placeholder: extra domain stop words to remove

def is_digit(word):
    try:
        int(word)
        return True
    except ValueError:
        return False

tokendef = []
i = 0
for row in df['definition']:
    line = str(row)
    try:
        # Lowercase the text
        line = line.lower()
        # Turn dashes into spaces first, so hyphenated words are not glued together
        line = line.replace('-', ' ').replace('–', ' ')
        # Remove punctuation
        line = line.translate(str.maketrans('', '', punctuation))
        # More preliminary cleanup
        line = line.replace('‘', '').replace('’', '').replace('↑', '')
        # Remove stopwords
        line = remove_stopwords(line)
        goodwords = [word for word in line.split() if word not in more_stops]
        # Remove number strings (but not alphanumerics)
        line = ' '.join(word for word in goodwords if not is_digit(word))
    except Exception as e:
        print('Exception error: ' + str(e))
    tokendef.append(line)
    i = i + 1

df['def_final'] = tokendef             # output column name assumed
df.drop('Unnamed: 0', axis=1, inplace=True)
df.drop('definition', axis=1, inplace=True)
df.to_csv(out_f)
print('Normalization applied to ' + str(i) + ' texts')
print('File written and closed.')
```

To be consistent with our PAGE processing steps, we will also extract bigrams from the definition text. We had earlier worked out this routine (see CWPK #63), which we generalize here. This also provides the core routines for preprocessing input text for evaluations:

```python
# Phrase extract bigrams, from CWPK #63
# (gensim 3.x syntax; gensim 4 renames common_terms to connector_words
#  and expects a str delimiter such as '_')
from gensim.models.phrases import Phraser, Phrases
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_definition_short.csv')
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_definition_bigram.csv'
common_terms = []  # hypothetical placeholder: connector words such as 'of'

# Phrases learns from a corpus of token lists, so train once over all definitions
sentences = [str(row).split() for row in df['definition']]
ngram = Phraser(Phrases(sentences, min_count=2, threshold=10, max_vocab_size=80000000,
                        delimiter=b'_', common_terms=common_terms))

definition = []
for tokens in sentences:
    line = ' '.join(ngram[tokens])     # apply the learned bigrams to this definition
    line = line.replace(' s ', ' ')    # drop stray possessive 's' tokens
    definition.append(line)

df['def_bigram'] = definition          # new column name assumed
df.drop('Unnamed: 0', axis=1, inplace=True)
df.drop('definition', axis=1, inplace=True)
df.to_csv(out_f)
print('Phrase extraction applied to ' + str(len(definition)) + ' texts')
print('File written and closed.')
```

```
Phrase extraction applied to 58069 texts
File written and closed.
```

Some of the other desired changes could be done in the open spreadsheet, so no programmatic code is provided here. We wanted to update the use of underscores in all URI identifiers, retain case, and separate multiple entries by blank space rather than commas or double pipes. We made these changes via simple find-and-replace for the subClassOf, superClassOf, and SuperType columns. (The id column is already a single token, so it was only checked for underscores.) Unlike the PAGES and definitions, which are all normalized and lowercased, for the prefLabel and altLabel columns we wanted to remove punctuation and separate entries by spaces, but retain case. Again, simple find-and-replace was used here. Thus, aside from the PAGES that we still need to merge in vector form (see below), these baby steps complete all of the text preprocessing in our master file. For these columns, we now have the inputs to the later vectorizing routines.

7. ‘PUSH DOWN’ SUPERTYPES

We discussed in CWPK #56 how we wanted to evaluate the SuperType assignments for a given KBpedia reference concept (RC). Our main desire is to give each RC its most specific SuperType assignments. Some STs are general, higher-level categories that provide limited or no discriminatory power.

We term the process of narrowing SuperType assignments to the lowest and most specific levels for a given RC a ‘push down’. The process we have defined first prunes any mention of a more general category within an RC’s current SuperType listing, unless doing so would leave the RC without an ST assignment. We supplement this approach with a second pass, where we iterate one by one over some common STs and remove them, again unless doing so would leave the RC without an ST assignment. Here is that code, which we write into a new column so that we do not lose the starting listing:

```python
# Narrow SuperTypes, per CWPK #56
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master.csv')
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_1.csv'
parent_set = []    # hypothetical placeholder: general STs pruned in the first pass
second_pass = []   # hypothetical placeholder: common STs tried one by one in the second pass

# First pass: de-duplicate, then drop general parents unless nothing would remain
clean_st = []
i = 0
for row in df['SuperType']:            # input column name assumed
    line = str(row).replace(', ', ' ')
    # Remove duplicates (dict keys are unique and keep insertion order)
    splitclass = list(dict.fromkeys(line.split()))
    goodclass = [word for word in splitclass if word not in parent_set]
    clean_st.append(' '.join(goodclass) if goodclass else ' '.join(splitclass))
    i = i + 1
print('First pass count: ' + str(i))

# Second pass: remove common STs one at a time, keeping at least one ST per RC
print('Starting second pass . . . .')
clean2_st = []
for i, row in enumerate(clean_st):
    tokens = row.split()
    for word in second_pass:
        trial = [t for t in tokens if t != word]   # whole-token match, not substring
        if trial:                                  # accept only if an ST remains
            tokens = trial
    line = ' '.join(tokens)
    clean2_st.append(line)
    # print('line: ' + str(i) + ' ' + line)

df['clean_ST'] = clean2_st
df.drop('Unnamed: 0', axis=1, inplace=True)
df.to_csv(out_f, encoding='utf-8')
print('ST reduction applied to ' + str(len(clean2_st)) + ' texts')
print('File written and closed.')
```

Depending on the number of print statements one might include, the routine above can produce a very long listing!

8. FINAL TEXT REVISIONS

We have a few minor changes to attend to prior to the numeric encoding of our data. The first revision is based on the fact that a minor portion of both altLabels and definitions are much longer than the others. We analyzed min, max, mean and median lengths for these two text sets. Taking roughly double the mean and median of each set as the cutoff, we trimmed the strings to a maximum length of 150 and 300 characters, respectively. We employed the textwrap package to make the splits at word boundaries:

```python
import pandas as pd
import textwrap

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master.csv')
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_1.csv'
col = 'altLabel'    # repeat for the definitions column with max_len = 300
max_len = 150

new_texts = []
for line in df[col]:
    if pd.isna(line) or str(line) == '':
        new_line = line                # leave missing or empty entries untouched
    else:
        # Truncate at a word boundary so the result is at most max_len characters
        new_line = textwrap.shorten(str(line), width=max_len, placeholder='')
    new_texts.append(new_line)
df[col] = new_texts

df.drop('Unnamed: 0', axis=1, inplace=True)
df.to_csv(out_f, encoding='utf-8')
print(col + ' entries shortened.')
print('File written and closed.')
```

We repeated this routine for both text sets and also did some minor punctuation clean up for the altLabels. We have now completed all text modifications and have a clean text master. We name this file kbpedia_master_text.csv and keep it for archive.

PREPARE NUMERIC ENCODINGS

We now shift gears from prepping and staging the text to encoding all information into a proper numeric form given its content.

9. PLAN THE NUMERIC ENCODING

Machine learning models can only take numbers as input. Here is one place where sklearn really shines with its utility functions. The manner by which we encode fields needs to be geared to the information content we can and want to convey, so context and scale (or its reciprocal, reduction) play prominent roles in deciding what form of encoding works best. Text and language encodings, which are among the most challenging, can range from naive unique numeric identifiers to adjacency, transformed, or learned representations for given contexts, including relations, categories, sentences, paragraphs or documents. A finite set of ‘categories’, or other fields with a discrete number of targets, can be the targets of learned representations that encompass a range of members. A sentence or document or image, for example, is often reduced to a fixed number of dimensions, sometimes ranging into the hundreds, that are represented by arrays of numbers. Initial encoding representations may be trained against a desired labeled set to adjust or transform those arrays to come into correspondence with their intended targets. Items with many dimensions can occupy sparse matrices where most values are zero (non-presence).
To reduce the size of large arrays, we may also apply further compression or dimension reduction through techniques like principal component analysis, or PCA.
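As an illustration of dimension reduction, here is a minimal PCA sketch with scikit-learn. The random 100-dimensional vectors are hypothetical stand-ins for encoded items; for very large scipy sparse matrices (such as word counts), `TruncatedSVD` is the usual sklearn counterpart, since it works on sparse input directly:

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
# Hypothetical data: 200 items, each a 100-dimensional numeric vector
X = rng.normal(size=(200, 100))

# Keep the 10 directions of greatest variance
pca = PCA(n_components=10)
X_reduced = pca.fit_transform(X)

print(X_reduced.shape)
print(pca.explained_variance_ratio_.sum())  # share of variance retained
```

With random data the retained variance is low; on real encodings, `explained_variance_ratio_` helps judge how many components are worth keeping.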

Many other clustering or dimension reduction techniques exist. The art or skill in machine learning often resides at the interface between raw input data and how it is transformed into these vectors recognizable by the computer. There are some automated ways to evaluate options and to help make parameter and model choice decisions, such as grid search.

sklearn, again, has many useful methods and tools in these general areas. I am drinking from a fire hose, and you will too if you poke around much in this area.
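To make ‘grid search’ concrete, here is a minimal sketch with scikit-learn’s `GridSearchCV` over a small pipeline. The synthetic data from `make_classification` and the choice of logistic regression are assumptions for illustration only, not the learners or features used later in CWPK:

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical synthetic data standing in for an encoded feature matrix and labels
X, y = make_classification(n_samples=300, n_features=20, random_state=0)

pipe = Pipeline([
    ('scale', StandardScaler()),
    ('clf', LogisticRegression(max_iter=1000)),
])

# Pipeline parameters are addressed as '<step name>__<parameter>'
param_grid = {'clf__C': [0.01, 0.1, 1.0, 10.0]}

# Tries each C value with 5-fold cross-validation and keeps the best
search = GridSearchCV(pipe, param_grid, cv=5, scoring='accuracy')
search.fit(X, y)

print(search.best_params_)
print(round(search.best_score_, 3))
```

Wrapping the preprocessing inside the pipeline means each cross-validation fold fits the scaler only on its own training portion.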

A general problem area, then, is often characterized by data that is heterogeneous in terms of what it captures, corresponding, if you will, to the different fields or columns one might find in a spreadsheet. We may have some numeric values that need to be normalized, some text information ranging from single category terms or identifiers to definitions or complete texts, or complex arrays that are themselves a transformation of some underlying information. At minimum, we can say that multiple techniques may be necessary for encoding multiple different input data columns. In terms of big data, pandas is the rough analog of the spreadsheet. A nice tutorial provides some guidance on working jointly with pandas and sklearn.

One early alternative for applying different transformations to different input data columns was the independent sklearn-pandas package. I have looked closely at this option, and I like its syntax and approach, but scikit-learn eventually adopted its own ColumnTransformer methods, which have become the standard and more actively developed option.

ColumnTransformer is a sklearn method for picking individual columns from pandas data sets, especially for heterogeneous data, and it can be combined into pipelines. The documentation includes examples for mixed data types, including how to render your pipelines as HTML, and for transforming multiple columns based on pandas inputs. There is much to learn in this area, but perhaps start with this pretty good overview and then see how these techniques might be combined into pipelines with custom transformers.

We will provide some ColumnTransformer examples, but I will also try to explain each baby step. I’ll talk more about individual techniques, but here are the encoding options we have identified for our KBpedia master file:

| ENCODING TYPE | APPLICABLE FIELDS |
|---|---|
| one-hot | clean_ST (also target) |
| count vector | id, prefLabel, subClassOf, superClassOf |
| tfidf | altLabel, definition |
| doc2vec | page |
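A minimal ColumnTransformer sketch of how the sklearn-side rows of this table might be wired together. The three toy rows are hypothetical and reuse some master-file column names; doc2vec is not part of scikit-learn, so the page column is left out here:

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer
from sklearn.preprocessing import OneHotEncoder

# Hypothetical toy rows using some of the master file's column names
df = pd.DataFrame({
    'clean_ST': ['Animals', 'Plants', 'Animals'],
    'prefLabel': ['Domestic cat', 'Oak tree', 'Gray wolf'],
    'definition': ['small domesticated carnivorous mammal',
                   'tree genus beech family',
                   'large canine native wilderness'],
})

encoder = ColumnTransformer([
    # OneHotEncoder expects 2-D input, so the column is passed as a list
    ('st', OneHotEncoder(handle_unknown='ignore'), ['clean_ST']),
    # Text vectorizers expect 1-D input, so the column is passed as a string
    ('label', CountVectorizer(), 'prefLabel'),
    ('defn', TfidfVectorizer(), 'definition'),
])

X = encoder.fit_transform(df)
print(X.shape)  # rows x (ST categories + label vocabulary + definition vocabulary)
```

Each transformer contributes its own block of columns side by side; here that is 2 one-hot columns, 6 label terms and 12 definition terms.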

We will cover these in the baby steps to follow.

10. CATEGORY (ONE-HOT) ENCODE

Category encoding takes a column listing of strings (generally one column, though there may be several) and converts the category strings to unique numbers. sklearn has a utility called LabelEncoder for this purpose. Since a single column of unique numbers might imply order or hierarchy to some learners, this approach may be followed by a OneHotEncoder, where each category is given its own column, with a binary match (1) or not (0) assigned to each column depending on what categories the row has. Depending on the number of categories, this column array can grow to quite a large size and pose memory issues. The category approach is definitely appropriate for a score of items at the low end, and perhaps up to 500 at the upper end. Since our active SuperType categories number about 70, we first explore this option.

scikit-learn has been actively developed of late, and this initial approach has been updated with an improved OneHotEncoder that works directly with strings, paired with the ColumnTransformer estimator. If you research category encoding online, you might be confused by these earlier and later descriptions. Know that the same general approach applies here of assigning an array of SuperType categories to each row entry in our master data.

However, as we saw before for our cleaned STs, some rows (reference concepts, or RCs) may have more than one category assigned. Though it is possible to run ColumnTransformer over multiple columns at once, sklearn produces a new output column for each input column. I was not able to find a way to convert multiple ST strings in a single column to their matching category values. I am pretty sure there is a way for experts to figure out this problem, but I was not able to do so. Fortunately, in the process of investigating these matters I encountered the pandas function get_dummies, which does one-hot encoding directly. More fortunately still, there is also a pandas string function that splits multiple values in one cell into individual ones, applied as str.get_dummies.

Further, with a bit of other Python magic, we can take the synthetic headers derived from the unique SuperType classes, give them a st_ prefix, and combine (concatenate) them into a new resulting pandas dataframe. The net result is a very slick and short routine for category encoding our clean SuperTypes:

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master.csv')
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_cleanst.csv'

df_2 = pd.concat(, axis=1)
df_2.drop('Unnamed: 0', axis=1, inplace=True)
df_2.to_csv(out_f, encoding='utf-8')
print('Categories one-hot encoded.')
print('File written and closed.')
```

The result of this routine can be seen in its get_dummies dataframe:

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_1.csv')
df.info()

df
```
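For the multi-valued case, here is a hedged sketch of the `str.get_dummies` idea on invented rows; the column name `superTypes` and the `', '` separator are assumptions for illustration. It splits each cell on the separator, builds one 0/1 column per distinct label, prefixes the headers with `st_`, and concatenates them onto the original frame. On the scikit-learn side, `MultiLabelBinarizer` accepts a list of labels per row and yields the same indicator matrix; it is shown only as an option, not as the method used in this installment.

```python
import pandas as pd
from sklearn.preprocessing import MultiLabelBinarizer

# Hypothetical rows; 'superTypes' holds comma-separated SuperType labels
df = pd.DataFrame({'id': ['Dog', 'Oak', 'Lichen'],
                   'superTypes': ['Animals', 'Plants', 'Plants, Fungi']})

# pandas: one 0/1 column per distinct label (columns come out sorted), then the st_ prefix
dummies = df['superTypes'].str.get_dummies(sep=', ').add_prefix('st_')
df_2 = pd.concat([df, dummies], axis=1)
print(df_2)

# scikit-learn alternative: give it one list of labels per row
mlb = MultiLabelBinarizer()
onehot_sk = mlb.fit_transform(df['superTypes'].str.split(', '))
print(list(mlb.classes_))   # ['Animals', 'Fungi', 'Plants']
```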

We will introduce sklearn pipelines at the end of this installment and the beginning of the next, which argues for keeping everything within this environment for grid search and other preprocessing and consistency tasks. But we are also looking for the simplest methods and will also be using master files to drive a host of subsequent tasks. In this regard, we are not solely focused on keeping the analysis pipeline totally within scikit-learn, but on establishing robust starting points for a diversity of machine learning platforms. Seen that way, the get_dummies approach may make some sense.

11. FILL IN MISSING VALUES and 12. OTHER CATEGORICAL TEXT (VOCABULARY) ENCODE

scikit-learn machine learners generally do not work on unbalanced datasets, in the sense of columns with missing values. If one column has x number of items, other comparative columns need to have the same. One can pare down the training sets to the lowest common number, or one may provide estimates or 'fill-ins' for the open items. Helpfully, sklearn has a number of useful utilities, including imputation for filling in missing values. Since, in the case of our KBpedia master, all missing values relate to text (especially for the altLabels category), we can simply assign a blank space as our fill-in using standard pandas utilities.
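Both routes to a blank-space fill can be sketched on an invented `altLabels` column: pandas `fillna`, as used in this installment, and scikit-learn's `SimpleImputer` with a constant strategy, which does the same job but can sit inside an sklearn pipeline. This is a minimal illustration, not the KBpedia routine itself.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer

# Hypothetical frame with missing text values
df = pd.DataFrame({'id': ['Dog', 'Oak', 'Paris'],
                   'altLabels': ['hound, canine', np.nan, np.nan]})

# pandas route: replace every NaN with a single blank space
filled_pd = df.fillna(' ')

# scikit-learn route: a constant-strategy imputer works on string (object) columns too
imputer = SimpleImputer(strategy='constant', fill_value=' ')
filled_sk = imputer.fit_transform(df[['altLabels']])   # 2-D object array, one column

print(filled_pd['altLabels'].tolist())   # ['hound, canine', ' ', ' ']
print(filled_sk.ravel().tolist())        # ['hound, canine', ' ', ' ']
```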

However, one can also do replacements, conditional fill-ins, averages and the like, depending on circumstances. If you have missing data, you should consult the package documentation. In our code example below (see Note 1), however, we limit ourselves to filling in with blank spaces.

There are a number of preprocessing encoding methods in sklearn useful for text, including CountVectorizer, HashingVectorizer, TfidfTransformer, and TfidfVectorizer.

The CountVectorizer creates a matrix of text tokens and their counts. The HashingVectorizer uses the hashing trick to map text tokens to column numbers with the least amount of memory, but with no ability to later look up the contributing tokens (and with the possibility that two tokens collide on the same number). The TfidfVectorizer calculates both term frequency and inverse document frequency, while the TfidfTransformer first requires the CountVectorizer to calculate term frequency.

The fields we need to encode include the prefLabel, the id, the subClassOf (parents), and the superClassOf (children) entries. The latter three are all based on the same listing of 58 K KBpedia reference concepts (RCs). The prefLabel has major overlap with these RCs, but its terms are not concatenated, and some synonyms and other qualifiers appear in the list. Nonetheless, because there is a great deal of overlap in all four of these fields, it appears best to use a combined vocabulary across all four fields. The term frequency/inverse document frequency (TF/IDF) method is a proven statistical way to indicate the importance of a term in relation to an entire corpus. Though we are dealing with a limited vocabulary for our RCs, some RCs are more often in relationships with other RCs, and some RCs are more frequently used than others. While the other methods would give us our needed numerical encoding, we first pick TF/IDF to test because it appears to retain the most useful information in its encoding.
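A small comparison of the four text encoders on three invented documents may help. It shows that `CountVectorizer` followed by `TfidfTransformer` reproduces `TfidfVectorizer` when both use default settings, and that `HashingVectorizer` needs no fitting but keeps no vocabulary for mapping columns back to tokens. The `n_features=16` value is arbitrary and chosen only to keep the output small.

```python
import numpy as np
from sklearn.feature_extraction.text import (CountVectorizer, HashingVectorizer,
                                             TfidfTransformer, TfidfVectorizer)

docs = ['mammal animal', 'tree plant', 'animal plant hybrid']   # invented examples

counts = CountVectorizer().fit_transform(docs)             # raw term counts, 5 tokens
tfidf_two_step = TfidfTransformer().fit_transform(counts)  # IDF weighting of those counts
tfidf_one_step = TfidfVectorizer().fit_transform(docs)     # both steps in one object

# With default settings the two routes give the same matrix
print(np.allclose(tfidf_two_step.toarray(), tfidf_one_step.toarray()))   # True

# Hashing is stateless: no fit, fixed width, and no vocabulary_ to look tokens up
hashed = HashingVectorizer(n_features=16).transform(docs)
print(hashed.shape)   # (3, 16)
```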

After taking care of missing items, we want our coding routine, then, to construct a common vocabulary across our four subject text fields. This common vocabulary and its frequency counts are what we will use to calculate the document frequencies across all four columns. For this purpose we will use the CountVectorizer method. Then, for each of the four individual columns that comprise this vocabulary, we will use the TfidfTransformer method to get the term frequencies for each entry and to construct its overall TF/IDF scores. We will need to construct this routine using the ColumnTransformer method described above. There are many choices and nuances to learn with this approach. While learning them, I came to realize some significant drawbacks. The number of unique tokens across all four columns is about 80,000. When parsed against the 58 K RCs, this results in a huge matrix, but a very sparse one. There is an average of about four tokens across all four columns for each RC, and rarely does an RC have more than seven. This unusual profile does make sense, though, since we are dealing with a controlled vocabulary of 58 K tokens for three of the columns, and close matches and synonyms with some type designators for the prefLabel field. So, any time our routines needed to access information in one of these four columns, we would incur a very large memory penalty. Since memory is a limiting factor in all cases, but especially so with my office-grade equipment, this is a heavy anchor to be dragging into the picture. Our first need, to create a shared vocabulary across all four input columns, brought the first insight for bypassing this large-matrix conundrum. The CountVectorizer output lists each non-zero entry as a tuple of row index and unique token ID. The token ID can be linked back to its text key, and the token IDs are assigned in alphabetical order of their text. Rather than needing to capture a large sparse matrix, we only need to record the IDs for the few matching terms.
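A minimal sketch of that idea, assuming a toy frame whose column names follow the prose (`id`, `prefLabel`, `subClassOf`, `superClassOf`) but whose values are invented. It joins the four columns row by row (a pandas stand-in for the concat_cols helper used in this installment), builds one shared vocabulary with `CountVectorizer`, then keeps only the token IDs for each cell and maps them back to text through the inverted `vocabulary_` dictionary.

```python
import pandas as pd
from sklearn.feature_extraction.text import CountVectorizer

# Hypothetical miniature of the four text fields
df = pd.DataFrame({
    'id':           ['Mammal', 'Dog', 'Cat'],
    'prefLabel':    ['mammal', 'dog', 'cat'],
    'subClassOf':   ['Animal', 'Mammal', 'Mammal'],
    'superClassOf': ['Dog Cat', ' ', ' '],
})
cols = ['id', 'prefLabel', 'subClassOf', 'superClassOf']

# Join the four columns row by row so that one vocabulary covers all of them
combined = df[cols].astype(str).apply(' '.join, axis=1)
cv = CountVectorizer().fit(combined)

vocab = cv.vocabulary_                            # token text -> token ID (alphabetical)
id_to_token = {i: t for t, i in vocab.items()}    # token ID -> token text

# Keep only the IDs of the tokens each cell contains, instead of a wide sparse row
analyze = cv.build_analyzer()                     # same lowercasing/tokenizing as fit()
subclass_ids = [[vocab[t] for t in analyze(text) if t in vocab]
                for text in df['subClassOf']]

print(sorted(vocab.items(), key=lambda kv: kv[1]))
print(subclass_ids)                                             # [[0], [3], [3]]
print([[id_to_token[i] for i in row] for row in subclass_ids])  # lossless text lookup
```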
What is really cool about this structure is that we can reduce our memory demand by more than 14,000 times while remaining fully lossless, with lookup to the actual text. Further, this vocabulary structure can be saved separately and incorporated in other learners and utilities. So, our initial routine lays out how we can combine the contents of our four columns, which then becomes the basis for fitting the TfidfVectorizer. (The vocabulary-building step of TfidfVectorizer is the same as CountVectorizer's, so either may be used.) Let's first look at this structure derived from our KBpedia master file:

```python
# Concatenation code adapted from https://github.com/scikit-learn/scikit-learn/issues/16148
import pandas as pd
from sklearn.feature_extraction.text import TfidfVectorizer

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master.csv')
df = df.fillna(' ')                  # Note 1
df = df.fillna(' ')

def concat_cols(df):
    assert len(df.columns) >= 2
    res = df.iloc.astype('str')
    for key in df.columns:
        res = res + ' ' + df
    return res

tf = TfidfVectorizer().fit(concat_cols(df))
print(tf.vocabulary_)
```

This gives us a vocabulary and then a lookup basis for linking our individual columns in this group of four. Note that some of these terms are quite long, since they are the product of an already concatenated identifier creation. That also makes them very strong signals for possible text matches.

Nearly all of these types of machine learners first require the data to be 'fit' and then 'transformed'. Fit means to learn from the input data whatever the method needs (for TfidfVectorizer, the vocabulary and the inverse document frequencies), including any format or validation checks. Transform means to apply what was learned to convert data into the form most useful to the learner at hand, such as floating-point frequency values. Each machine learner has its own approaches to fit and transform, and parameters set when these functions are called may tweak the methods further. The two steps may be combined into a slightly more efficient 'fit-transform' step, which is the approach we take in this example:

```python
import csv
from sklearn.compose import make_column_transformer

token_gen = make_column_transformer(
    (TfidfVectorizer(vocabulary=tf.vocabulary_), 'prefLabel'))

tfidf_array = token_gen.fit_transform(df)
print(tfidf_array)
```
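The fit/transform distinction and the effect of passing a fixed vocabulary can be seen in a short sketch with an invented four-token vocabulary and two invented columns. `fit` learns the IDF weights, `transform` applies them, and `fit_transform` gives the same result in one call. Because the vocabulary is fixed, column j refers to the same token in every column's matrix, even though each column's IDF weights are learned separately.

```python
import numpy as np
import pandas as pd
from sklearn.feature_extraction.text import TfidfVectorizer

# Hypothetical shared vocabulary and two different text columns
shared = {'animal': 0, 'cat': 1, 'dog': 2, 'mammal': 3}
pref = pd.Series(['dog', 'cat', 'mammal'])
parents = pd.Series(['mammal', 'mammal', 'animal'])

# fit() learns IDF weights, transform() applies them; fit_transform() does both
v1 = TfidfVectorizer(vocabulary=shared)
a = v1.fit(pref).transform(pref)
b = TfidfVectorizer(vocabulary=shared).fit_transform(pref)
print(np.allclose(a.toarray(), b.toarray()))   # True

# Same fixed vocabulary on another column: same width, same meaning per column index
c = TfidfVectorizer(vocabulary=shared).fit_transform(parents)
print(a.shape, c.shape)                        # (3, 4) (3, 4)
```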

So we surmise that we can loop over these result sets for each of the four columns, loop over the matching token IDs for each unique ID, and then write out a simple array for each RC entry. In the case of id there will be a single matching token ID. For the other three columns, there are at most a few entries. This promises to provide a very efficient encoding that we can also tie into external routines as appropriate.
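The loop just described can work on the sparse matrix's own arrays rather than on its printed form. A minimal sketch, assuming a single invented text column: a CSR matrix stores each row's column indices (here, token IDs) in `indices`, delimited per row by `indptr`, with the matching weights at the same positions in `data`. The token IDs per row can be read directly and written out as a compact, space-separated field; the printed form, by contrast, is meant for display rather than storage.

```python
import pandas as pd
from sklearn.feature_extraction.text import TfidfVectorizer

# Hypothetical single text column standing in for one of the four fields
texts = pd.Series(['dog cat', 'mammal', 'dog mammal animal'])

X = TfidfVectorizer().fit_transform(texts).tocsr()   # one sparse row per entry

# CSR layout: row r's token IDs are X.indices[X.indptr[r]:X.indptr[r + 1]],
# and the matching TF/IDF weights sit at the same positions in X.data
token_ids = []
for r in range(X.shape[0]):
    start, end = X.indptr[r], X.indptr[r + 1]
    token_ids.append(sorted(X.indices[start:end].tolist()))

# One compact, space-separated field per row, ready for a CSV column
lines = [' '.join(map(str, ids)) for ids in token_ids]
print(token_ids)   # [[1, 2], [3], [0, 2, 3]]
print(lines)       # ['1 2', '3', '0 2 3']
```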

Alas, like much else that appears simple on the face of it, what one sees when printing this output is not the data form presented when saving it to file. For example, if one does a type(tfidf_array), we see that the object is actually a fairly complicated data structure, a scipy.sparse.csr_matrix.

(scikit-learn is itself based on SciPy, which is itself based on NumPy.) We get hints that we might be working with a different data structure when print statements produce truncated listings in terms of rows and columns. We cannot do our typical tricks on this structure, like converting it to a string or list, prior to standard string processing. What we first need to do is get it into a manipulable form, such as a pandas dataframe written out as CSV. We need to do this for each of the four columns separately:

```python
# Adapted from https://www.biostars.org/p/452028/
import pandas as pd
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.compose import make_column_transformer
import numpy as np
from scipy.sparse import csr_matrix

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master.csv')
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_preflabel.csv'
out_file = open(out_f, 'w', encoding='utf8')
#cols =
cols =

df = df.fillna(' ')
df = df.fillna(' ')

def concat_cols(df):
    assert len(df.columns) >= 2
    res = df.iloc.astype('str')
    for key in df.columns:
        res = res + ' ' + df
    return res

tf = TfidfVectorizer().fit(concat_cols(df))

for c in cols:                       # Using 'prefLabel' as our example
    c_label = str(c)
    print(c_label)
    token_gen = make_column_transformer(
        (TfidfVectorizer(vocabulary=tf.vocabulary_), c_label))
    tokens = token_gen.fit_transform(df)
    print(tokens)

df_2 = pd.DataFrame(data=tokens)
df_2.to_csv(out_f, encoding='utf-8')
print('File written and closed.')
```

Here is a sample of what such a file output looks like:

```
0," (0, 66038) 0.6551037573323365 (0, 42860) 0.7555389249595651"
1," (0, 75910) 1.0"
2," (0, 50502) 1.0"
3," (0, 55394) 0.7704414465093152 (0, 53041) 0.637510766576247"
4," (0, 75414) 0.4035084561691855 (0, 35644) 0.5053985169471683 (0, 13178) 0.47182153985726 (0, 9754) 0.5992809853435092"
5," (0, 50446) 0.7232652656552668 (0, 5844) 0.6905703117689149"
6," (0, 41964) 0.5266799956122452 (0, 12319) 0.8500636342191596"
7," (0, 67750) 0.7206261499559882 (0, 47278) 0.45256791509595035 (0, 27998) 0.5252430239663488"
```

Inspection of the scipy.sparse.csr_matrix files shows that the frequency values are separated from the index and key by a tab, sometimes with multiple values per entry. This form can certainly be processed with Python, but we can also open the files as tab-delimited in a local spreadsheet and then delete the frequency column to get a much simpler form to wrangle. Since this only takes a few minutes, we take this path. This is the basis, then, that we need to clean up for our "simple" vectors, reflected in this generic routine, with the file names slightly changed to account for the difference:

```python
import pandas as pd
import csv
import re  # If we want to use regex; we don't here

in_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_superclassof.csv'
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_superclassof_ok.csv'
#out_file = open(out_f, 'w', encoding='utf8')
cols =

with open(in_f, 'r', encoding='utf8') as infile, open(out_f, 'w', encoding='utf8', newline='') as outfile:
    reader = csv.reader(infile)
    writer = csv.writer(outfile, delimiter=' ', quoting=csv.QUOTE_NONE, escapechar='\\')
    new_row =
    for row in reader:
        row = str(row)
        row = row.replace('"', '')
        row = row.replace(", (0, ", "','")
        row = row.replace("' (0', ' ", "@'")
        row = row.replace(")", "")
        row = str(row)                             # A nifty trick for removing square brackets around a list
        new_row.append(row)
    # Transition here because we need to find-replace across rows
    new_row = str(new_row)
    new_row = new_row.replace('"', '')            # Notice how bracketing quotes change depending on target text
    new_row = new_row.replace("', @'", ",")       # Notice how bracketing quotes change depending on target text
    new_row = new_row.replace("', '", "'\n'")
    new_row = new_row.replace("''\n", "")
    print(new_row)
    writer.writerow()

print('Matrix conversion complete.')
```

The basic approach is to baby-step a change, review the output, and plan the next substitution. It is a hack, but it is also pretty remarkable to be able to bridge such disparate data structures. My investment in learning Python is now really paying off. I'll always be interested in figuring out more efficient ways, but the entry point to doing so is getting real stuff done.

Since our major focus here is on data wrangling, known as feature engineering in a machine learning context, it is not enough to write these routines and cursorily test outputs. Though not needed for every intermediate step, after sufficiently accumulating changes and interim files, it is advisable to visually inspect your current work product. Automated routines can easily mask edge cases that are wrong. Since we are nearly at the point of committing to the file vector representations our learners will work from, this marks a good time to manually confirm results. In inspecting the kbpedia_master_preflabel_ok.csv example, I found about 25 of the 58 K reference concepts with either a format or representation problem. That inspection, scrolling through the entire listing looking for visual triggers, took about thirty to forty minutes. Granted, those errors are less than 0.05% of our population, but they are errors nonetheless. The inspection of the first 5 instances in comparison to the master file (using the common id) took another 10 minutes or so. That led me to the hypothesis that periods ('.') caused labels to be skipped, along with other issues related to individual character or symbol use. The actual inspection and correction of these items took perhaps another thirty to forty minutes; about 40 percent were due to periods, the others to specific symbols or characters. The id file checked out well. That took just a few minutes to fast scroll through the listing looking for visual flags. Another ten to fifteen minutes showed the subClassOf file to check out as well. This file took a bit longer because every reference concept has at least one parent.
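Part of this visual inspection can be backed by a quick automated check. A minimal sketch, assuming scikit-learn's default `token_pattern` (runs of two or more word characters) and invented sample labels: it shows one plausible way a period alters tokenization, splitting a label into separate tokens, and flags rows that produced no tokens at all, since in CSR form such rows have no stored entries.

```python
import numpy as np
import pandas as pd
from sklearn.feature_extraction.text import CountVectorizer

# Hypothetical labels, including edge cases of the kind found by eye
labels = pd.Series(['BigCity', 'St.Louis', 'X', '%'])

cv = CountVectorizer()                  # default token_pattern: 2+ word characters
X = cv.fit_transform(labels).tocsr()

# The period splits 'St.Louis' into two separate tokens
print(sorted(cv.vocabulary_))           # ['bigcity', 'louis', 'st']

# Rows with no stored entries yielded no tokens and deserve a manual look
empty_rows = np.flatnonzero(np.diff(X.indptr) == 0)
print(labels.iloc[empty_rows].tolist())  # ['X', '%']
```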

However, when checking the superClassOf file I turned up more than 100 errors. Checking and recording them took about forty minutes, longer than for any of the other files. I feared that checking these instances to resolution would take tremendous time, but as I began inspecting my initial sample, all were turning out to be extremely long fields. Indeed, the problem that had caused me to flag these entries in the initial review was a colon (':') in the listing, which is how a printed SciPy sparse matrix marks a truncated listing. The introduced colon was the apparent cause of the problem in all concepts I sampled. I determined the likely maximum length of superClassOf entries to be about 240 characters. Fortunately, we already have a script in STEP 8 (FINAL TEXT REVISIONS) to shorten fields; we had clearly overlooked these 100 instances where superClassOf entries are too long. We add this filter to STEP 8 and then re-run all of the routines from that point forward. It took about an hour to repeat all steps from there. This case validates why committing to scripts is sound practice.

13. AN ALTERNATE TF/IDF ENCODE

Our earlier experience with TF-IDF and CountVectorizer was not the easiest to set up. I wanted to see if there were better or easier ways to conduct a TF-IDF analysis. We still had the definition and altLabel columns to encode. In my initial diligence I had come across a wrapper around many interesting text functions. It is called Texthero, and it provides a consistent interface over NLTK, spaCy, Gensim, TextBlob and sklearn. It provides common text wrangling utilities, NLP tools, vector representations, and results visualization. If your problem area falls within the scope of this tool, you will be rewarded with a very straightforward interface. However, if you need to tweak parameters or modify what comes out of the box, Texthero is likely not the tool for you.
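For readers who do want to tweak what a wrapper hides, the same kind of TF-IDF calculation is available directly in scikit-learn, where `min_df` and `max_features` are ordinary `TfidfVectorizer` parameters. This is a minimal sketch on invented definitions; it is not claimed to reproduce Texthero's output exactly, since the wrapper's defaults may differ.

```python
import pandas as pd
from sklearn.feature_extraction.text import TfidfVectorizer

# Hypothetical definitions column
texts = pd.Series(['a small domesticated canine',
                   'a small feline kept as a pet',
                   'a tall woody plant'])

# min_df drops tokens found in fewer than min_df documents;
# max_features then keeps at most that many of the most frequent remaining tokens
vec = TfidfVectorizer(min_df=2, max_features=1000)
X = vec.fit_transform(texts)

print(sorted(vec.vocabulary_))   # ['small']  (only token present in 2+ documents)
print(X.shape)                   # (3, 1)
```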

Since TF-IDF is one of its built-in capabilities, we can show the simple approach available:

```python
import pandas as pd
import texthero as hero

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_definition_bigram.csv')
out_f = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_definition.csv'

#df = df.fillna(' ')
texts = df

df = hero.tfidf(texts, min_df=1, max_features=1000)
df.drop('Unnamed: 0', axis=1, inplace=True)
df = df

df.to_csv(out_f, encoding='utf-8')
print('TF/IDF calculations completed.')
print('File written and closed.')
```

```
TF/IDF calculations completed.
File written and closed.
```

We apply this code to both the altLabel and definition fields with different parameters: (min_df=1, 500 features) and (min_df=3, 1000 features) for these fields, respectively. Since these routines produce large files that cannot be easily viewed, we write them out with only the frequencies and the id field as the mapping key.

14. PREPARE DOC2VEC VECTORS FROM PAGES FOR MASTER

We discussed word and document embedding in the previous installment. We're attempting to capture a vector representation of the Wikipedia page descriptions for about 31 K of the 58 K reference concepts (RCs) in current KBpedia. We found doc2vec to be a promising approach. Our prior installment had us representing our documents with about 1000 features. We will retain these earlier settings and build on the last results.

We have already built our model, so we need to load it, read in our source files, and calculate our doc2vec values per input line, that is, per RC with a Wikipedia article. To output strings readable by the next step in the pipeline, we also need to make some formatting changes, including find-and-replaces for line feeds and carriage returns. As we process each line, we append its result to a list that updates our initial records with the newly calculated vectors in a new column ('doc2vec'):

```python
from gensim.models.doc2vec import Doc2Vec, TaggedLineDocument
import pandas as pd

in_f = r'C:\1-PythonProjects\kbpedia\v300\models\inputs\kbpedia-pages.csv'
out_f = r'C:\1-PythonProjects\kbpedia\v300\models\inputs\kbpedia-d2v.csv'
df = pd.read_csv(in_f, header=0, usecols=)
src = r'C:\1-PythonProjects\kbpedia\v300\models\results\wikipedia-d2v.model'
model = Doc2Vec.load(src)
doc2vec =

# For each row in df.id
for i in df:
    array = model.docvecs            # gensim 4.x renamed docvecs to dv
    array = str(array)
    array = array.replace('\r', '')
    array = array.replace('\n', '')
    array = array.replace(' ', ' ')
    array = array.replace(' ', ' ')
    count = i + 1
    doc2vec.append(array)

df = doc2vec
df.to_csv(out_f)

print('Processing done with ', count, 'records')
```

One of the things we notice as we process this information is that I have been inconsistent in the use of 'id', which matters all the more now that it has emerged as the primary lookup key. Looking over the efforts to date, I see that sometimes 'id' is the numeric sequence ID, sometimes it is the unique URI fragment (the standard within KBpedia), and sometimes it is a Wikipedia URI fragment with underscores for spaces. The latter is the basis for the existing doc2vec files. Conformance with the original sense of 'id' means using the URI fragment that uniquely identifies each reference concept (RC) in KBpedia. This is the common sense I want to enforce. It has to be unique within KBpedia, and therefore is a key that any contributing file should reference in order to bring its information into the system. I need to clean up the sloppiness. This need for consistency forces me to use the merge steps noted under STEP 5 above to map the canonical 'id' (the KBpedia URI fragment) to the Wikipedia IDs used in our mappings. Again, scripts are our friend, and we are able to bring this pages file into compliance without problems.
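The id clean-up amounts to a merge against a mapping table. A hedged sketch with invented rows: `mapping` is a hypothetical table pairing each canonical KBpedia `id` with its Wikipedia-style ID (`wp_id` is likewise a made-up column name), and `validate='one_to_one'` makes pandas raise a `MergeError` if either side repeats a key, so a bad mapping stops the script rather than silently multiplying rows.

```python
import pandas as pd

# Hypothetical doc2vec output keyed by Wikipedia-style IDs (underscores for spaces)
pages = pd.DataFrame({'wp_id': ['Domestic_dog', 'Oak'],
                      'doc2vec': ['0.12 -0.40', '0.33 0.05']})

# Hypothetical mapping table: canonical KBpedia id <-> Wikipedia-style ID
mapping = pd.DataFrame({'id': ['Dog', 'OakTree'],
                        'wp_id': ['Domestic_dog', 'Oak']})

# Inner merge on the Wikipedia key; raises MergeError if either side repeats a key
pages_ok = pd.merge(mapping, pages, on='wp_id', how='inner', validate='one_to_one')
pages_ok = pages_ok.drop(columns='wp_id')   # keep only the canonical id as the key
print(pages_ok)
```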

After this replacement, we have completed the representation of our input information in machine learner form. It is time for us to create the vector lookup file.

CONSOLIDATE A NEW VECTOR MASTER FILE

OK, we have now successfully converted all categorical, text, and other non-numeric information into numeric forms readable by our learners. We need to consolidate this information, since it will be the starting basis for our next machine learning efforts. In a production setting, all of these individual steps and scripts would be better organized into formal programs and routines, best embedded within the pipeline construct of your choice (though having diverse scopes, gensim, sklearn, and spaCy all have pipeline capabilities).

To best stage our machine learning tests to come, I decided to create a parallel master file, only this one using vectors rather than text or categories as its column contents. We want the column structure to roughly parallel the text structure, and we also decide to keep the page information separate (but readily incorporable) to keep general file sizes manageable. Again, we use id as our master lookup key, specifically referring to the unique URI fragment for each KBpedia RC.

15. MERGE PAGE VECTORS INTO MASTER

Under STEP 5 above we identified and wrote a code block for merging information from two different files based on matching keys between the source and the target. Care is required to respect key matches and cardinality: the matching keys from different files must have the right reference and format to indeed match. Since we like the idea of a master 'accumulating' file to which all contributed files map, we use the left-join option of the merge we first described under STEP 5, continually using the master 'accumulating' file as our left-join basis. We name the vector version of our master file kbpedia_master_vec.csv, and we proceed to merge all prior files with vectors into it. (We will not immediately merge the doc2vec pages file since it is quite large; we will only do this merge as needed for later analysis.) As we've noted before, we want to proceed in column order from left to right, based on our column order in the earlier master.
Here is the general routine with some commented-out lines used on occasion to clean up the columns to be kept or their ordering:

```python
import pandas as pd

file_a = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_vec.csv'
file_b = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_cleanst.csv'
file_out = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_vec1.csv'

df_a = pd.read_csv(file_a)
# error_bad_lines was deprecated in pandas 1.3 and removed in 2.0; newer versions use on_bad_lines='skip'
df_b = pd.read_csv(file_b, engine='python', encoding='utf-8', error_bad_lines=False)
merged_inner = pd.merge(left=df_a, right=df_b, how='outer', left_on='id_x', right_on='id')
# Since both sources share the 'id' column name, we could skip the 'left_on' and 'right_on' parameters
# What's the size of the output data?
merged_inner.shape

merged_inner

merged_inner.to_csv(file_out)
print('Merge complete.')
```

```
Merge complete.
```
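A left join can also guard itself. In this sketch with invented frames, `how='left'` keeps every master row (the listing above passes `how='outer'`, which would also keep rows found only in the contributed file), `validate='one_to_one'` rejects duplicated keys, and `indicator=True` adds a `_merge` column showing which master rows found no partner. The assertion is a scripted version of the "has the number of records grown?" check.

```python
import pandas as pd

# Hypothetical accumulating master and a contributed file
master = pd.DataFrame({'id': ['Dog', 'Cat', 'Oak'], 'pref_token': [3, 1, 7]})
contrib = pd.DataFrame({'id': ['Dog', 'Oak'], 'st_Animals': [1, 0]})

n_before = len(master)
merged = pd.merge(master, contrib, on='id', how='left',
                  validate='one_to_one', indicator=True)

# A left join on unique keys must not change the number of master rows
assert len(merged) == n_before, 'merge added rows; check for duplicate keys'

# '_merge' == 'left_only' marks master rows with no match in the contributed file
unmatched = merged.loc[merged['_merge'] == 'left_only', 'id'].tolist()
print(unmatched)   # ['Cat']
merged = merged.drop(columns='_merge')
```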

After each merge, we remove the extraneous columns by writing to file only the columns we want to keep. We can also directly drop columns and do other activities such as renaming. We may also need to change the datatype of a column because of default parameters in the pandas routines.

The code block below shows some of these actions, with others commented out. The basic workflow, then, is to invoke the next file, make sure our merge conditions are appropriate to the two files undergoing a merge, save to file with a different file name, inspect those results, and make further changes until the merge is clean. Then we move on to the next item requiring incorporation, and rinse and repeat.

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_vec1.csv')
file_out = r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_vec.csv'
df = df

#df = df.rename(columns={'id_x': 'id'})       # rename returns a new dataframe, so reassign it
#df.drop('Unnamed: 0', axis=1, inplace=True)
#df.drop('Unnamed: 0.1', axis=1, inplace=True)
df.info()

df.to_csv(file_out)

print('File written.')
df
```
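A sketch of these clean-up steps on invented CSV text. The `Unnamed: 0` columns appear because an earlier `to_csv()` wrote the dataframe index, so passing `index=False` when writing avoids them; `rename` returns a new dataframe unless it is reassigned; and `astype` resets a column type that pandas inferred by default. Column names follow this installment, but the values are made up.

```python
import io
import pandas as pd

# Hypothetical CSV text as written by an earlier to_csv() call that kept the index
csv_text = ',id_x,id,pref_token\n0,Dog,Dog,3.0\n1,Cat,Cat,1.0\n'
df = pd.read_csv(io.StringIO(csv_text))    # the index column comes back as 'Unnamed: 0'

# Drop leftover index columns and the duplicate key in one call
df = df.drop(columns=[c for c in df.columns if c.startswith('Unnamed')] + ['id'])
df = df.rename(columns={'id_x': 'id'})     # rename returns a new frame, so reassign
df['pref_token'] = df['pref_token'].astype('int64')   # undo the default float inference

buf = io.StringIO()
df.to_csv(buf, index=False)                # index=False: no new 'Unnamed: 0' next time
print(buf.getvalue())
```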

We can also readily inspect the before and after files to make sure we are getting the results we expect:

```python
import pandas as pd

df = pd.read_csv(r'C:/1-PythonProjects/kbpedia/v300/models/inputs/kbpedia_master_vec.csv')
df.info()

df
```
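Duplicate keys are the usual reason a merge adds rows, and one kind that is easy to miss differs only in capitalization. A minimal sketch on an invented `id` column: exact duplicates are found with `duplicated`, and nominal duplicates by comparing case-folded ids.

```python
import pandas as pd

# Hypothetical id column containing a capitalization-only duplicate
df = pd.DataFrame({'id': ['Dog', 'Cat', 'dog', 'Oak']})

# Exact duplicates would break a one-to-one merge outright
exact = df[df['id'].duplicated(keep=False)]

# Nominal duplicates: ids that collide once case is ignored
folded = df['id'].str.casefold()
nominal = df[folded.duplicated(keep=False)]

print(len(exact))               # 0
print(nominal['id'].tolist())   # ['Dog', 'dog']
```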

It is important to inspect results as this process unfolds and to check each run to make sure the number of records has not grown. If it does grow, some problem in the input files is keeping the merges from being clean, which can add more rows (records) to the merged entity. For example, one problem I found was duplicate reference concepts (RCs) that varied only in capitalization (especially when merging on the basis of the KBpedia URI fragments). That caused me to reach back quite a few steps to correct the input problem. I have also flagged doing a more thorough check for nominal duplicates for the next version release of KBpedia. Other items causing processing problems may include punctuation, or errors it introduced in earlier processing steps. One of the reasons I kept the id field from both files in the first step of these incremental merges was to have a readable basis for checking proper registry and to identify possible problem concepts. Once the checks were complete, I could delete the extraneous id column. The result of this incremental merging and assembly was the final kbpedia_master_vec.csv file, which we will have much occasion to discuss in the next installments. The column structure of this final vector file is:

* id
* id_token
* pref_token
* sub_token
* count
* super_token
* alt_tfidf
* def_tfidf
* st_AVInfo
* st_ActionTypes
* plus 66 more STs

NOW READY FOR USE

We are now ready to move to the use of scikit-learn for real applications, which we address in the next CWPK installment.

ADDITIONAL DOCUMENTATION

Here is some additional documentation that provides background to today's installment.

* 50 tips for sklearn
* Scikit-learn Tutorial: Machine Learning in Python is an excellent starting point to gain a basic understanding
* skorch is a scikit-learn compatible neural network library that wraps PyTorch and has scoring routines, perhaps applicable across the board
* Category Encoders – a set of scikit-learn-style transformers for encoding categorical variables into numeric by means of different techniques; encoding of category or lists functions
* The preprocessing module provides means to get to some level of standardized input, using scalings and transformations among other techniques
* Model Evaluation provides an impressive number of scoring parameters and metrics
* pandas and scikit-learn
* Lesson 4. Introduction to List Comprehensions in Python: Write More Efficient Loops
* Curse of Dimensionality

NOTE: This article is part of the Cooking with Python and KBpedia series. See the CWPK listing for other articles in the series. KBpedia has its own Web site. The _cowpoke_ Python code listing covering the series is also available from GitHub.

NOTE: This CWPK installment is available both as an online interactive file and as a direct download to use locally. Make sure to pick the correct installment number. For the online interactive option, pick the *.ipynb file. It may take a bit of time for the interactive option to load.

I am at best an amateur with Python. There are likely more efficient methods for coding these steps than what I provide. I encourage you to experiment (which is part of the fun of Python) and to notify me should you make improvements.

Posted by AI3's author, MIKE BERGMAN
Posted on November 23, 2020 at 9:38 am in CWPK, KBpedia, Semantic Web Tools

> The URI link reference to this post is:
> HTTPS://WWW.MKBERGMAN.COM/2418/CWPK-65-SCIKIT-LEARN-BASICS-AND-INITIAL-ENCODING/
> The URI to trackback this post is:
> HTTPS://WWW.MKBERGMAN.COM/2418/CWPK-65-SCIKIT-LEARN-BASICS-AND-INITIAL-ENCODING/TRACKBACK/

POSTED:NOVEMBER 12, 2020

CWPK #64: EMBEDDINGS, SUMMARIZATION AND ENTITY RECOGNITION

SOME MACHINE LEARNING APPLIED TO NLP PROBLEMS

In the last installment of _Cooking with Python and KBpedia_ we collected roughly 45,000 articles from Wikipedia that match KBpedia reference concepts. We then did some pre-processing of the text using the gensim package to lowercase the text, remove stop words, and identify bi-gram and tri-gram phrases. These types of functions and extractions are a strength of gensim, which should be part of your pre-processing arsenal.

It is now time for us to process the natural language of this text and to use it for creating word and document embedding models. For the latter, we will continue to use gensim. For the former, however, we will introduce and use a very powerful NLP package, spaCy. As noted earlier, spaCy has a clear function orientation to NLP and also has some powerful extension mechanisms. Our plan of attack in this installment is to finish the word embeddings with gensim, and then move on to explore the spaCy package. We will not explore all aspects of this package, but will focus on text summarization and (named) entity recognition, using both model-based and rule-based approaches.

WORD AND DOCUMENT EMBEDDING

As we have noted in CWPK #61, there exist many pre-calculated word and document embedding models. However, because of the clean scope of KBpedia and our interest in manipulating embeddings for various learning models, we want to create our own embeddings.

There are many scopes and methods for creating embeddings. Embeddings, you recall, are a vector representation of information in a reduced dimensional space. Embeddings are a proven way to represent sparse matrix information like language (meaning many dimensions of words and phrases matched to one another) in a more efficient coding format usable by a computer. Embedding scopes may range from words, phrases, sentences, paragraphs, sections of documents, or documents, as well as senses, topics, sentiments, categories or other relations that might cut across a given corpus. Methods may range from sequences to counts to simple statistics, or all the way up to deep learning with neural nets. Of late, a method combining encoders and decoders, called the 'transformer', has been the rage, with BERT a prominent instantiation; ELMo, another prominent contextual embedding model, builds on recurrent (LSTM) networks instead.

Because we have already been exercising the gensim package, we decide to proceed with our own word embedding and document embedding models. Following the gensim documentation, we first prepare a word2vec model:

NOTE: Due to GitHub's file size limits, the various text file inputs referenced in this installment may be found on the KBpedia site as zipped files (for example, https://kbpedia.org/cwpk-text/wikipedia-trigram.zip for the input file mentioned next). Due to their very large sizes, you will need to create locally all of the models mentioned in this installment (with *.vec or *.model extensions).

```python
import sys
from gensim.models import Word2Vec
from gensim.models.word2vec import LineSentence
from smart_open import smart_open    # newer smart_open releases deprecate this in favor of smart_open.open

in_f = r'C:\1-PythonProjects\kbpedia\v300\models\inputs\wikipedia-trigram.txt'
out_model = r'C:\1-PythonProjects\kbpedia\v300\models\results\wikipedia-w2v.model'
out_vec = r'C:\1-PythonProjects\kbpedia\v300\models\results\wikipedia-w2v.vec'

input = smart_open(in_f, 'r', encoding='utf-8')
walks = LineSentence(input)
# Written for gensim 3.x; gensim 4.x renamed size -> vector_size and iter -> epochs
model = Word2Vec(walks, size=300, window=5, min_count=3, hs=1, sg=1, workers=5, negative=5, iter=10)
model.save(out_model)
model.wv.save_word2vec_format(out_vec, binary=False)
```

This works pretty slickly and only requires a few lines of code. The model run takes about 2:15 hrs on my laptop; processing the entire English Wikipedia corpus reportedly takes about 9 hrs. Note that we begin the training process with the tri-gram input corpus created in the last installment.

A few of the model parameters deserve some commentary. The size parameter is one of the most important. It sets the number of dimensions over which you want to capture a correspondence statistic, which is the actual dimension reduction at the core of the entire exercise. Remember, a collocation matrix for natural language is a very sparse one. With the Wikipedia pages from KBpedia set up as they are so far (stoplist, trigrams, and such), our current corpus has 1.3 million tokens, which gives a very sparse matrix when the second dimension is extended by that same amount. Setting size beyond a few hundred dimensions greatly increases training time and (perhaps paradoxically) can lower accuracy. The window parameter is the number of words to either side of the current token for which adjacency is calculated, so a window of five spans up to eleven tokens: the subject token and five to either side. min_count is the minimum number of occurrences a token (including phrases or n-grams treated as single tokens) needs to be kept in the vocabulary. sg=1 invokes the 'skip-gram' method rather than gensim's default (sg=0), the 'cbow' (continuous bag of words) method.

As with any central Python function, you should study this one to learn about its other settable parameters. Most important, however, is to learn what these settings do, test those you deem critical, and realize that fine-tuning such parameters is likely the key to successful results with your machine learning efforts. It is an open secret that success with machine learning depends on setting up and then tweaking the parameters that go into any particular method.
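To make the window arithmetic concrete, here is a minimal sketch of how skip-gram training pairs each center token with its neighbors under a fixed symmetric window. It assumes a fixed window for clarity; gensim's actual implementation also randomly shrinks the effective window for each target word, so the eleven-token span is a maximum. The function name skipgram_pairs is hypothetical.

```python
from typing import List, Tuple


def skipgram_pairs(tokens: List[str], window: int = 5) -> List[Tuple[str, str]]:
    """Return (center, context) pairs using a fixed symmetric window."""
    pairs = []
    for i, center in enumerate(tokens):
        # Clip the window at the sentence boundaries
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # the center token is not its own context
                pairs.append((center, tokens[j]))
    return pairs


# An 11-token sentence: the middle token sees 5 neighbors on each side
tokens = [f"t{i}" for i in range(11)]
pairs = skipgram_pairs(tokens, window=5)
middle = [ctx for c, ctx in pairs if c == "t5"]
print(len(middle))  # 10 context tokens, i.e. an 11-token span with the center
```

Skip-gram then trains the center word's vector to predict each of these context words, which is why larger windows increase training time.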

We can adapt the same code block to set up the doc2vec method:

from gensim.models.doc2vec import Doc2Vec, TaggedLineDocument
from smart_open import smart_open

in_f = r'C:\1-PythonProjects\kbpedia\v300\models\inputs\wikipedia-trigram.txt'
out_model = r'C:\1-PythonProjects\kbpedia\v300\models\results\wikipedia-d2v.model'
out_vec = r'C:\1-PythonProjects\kbpedia\v300\models\results\wikipedia-d2v.vec'

# Each line of the input becomes one TaggedDocument, tagged with its line number
input_file = smart_open(in_f, 'r', encoding='utf-8')
documents = TaggedLineDocument(input_file)
training_src = list(documents)
print(training_src[0])  # inspect one tagged document rather than the whole corpus

model = Doc2Vec(vector_size=300, min_count=15, epochs=30)
model.build_vocab(training_src)
model.train(training_src, total_examples=model.corpus_count, epochs=model.epochs)
model.save(out_model)
model.save_word2vec_format(out_vec, binary=False)

# infer_vector() requires a list of tokens; here we re-infer the first training document
print(model.infer_vector(training_src[0].words))

The doc2vec method has a similar setup. The main difference is that vectors are now calculated for whole lines of text (each line of the input file is one tagged document) rather than only for individual words. We also increase the min_count parameter. We'll see the results of this training in the next section. gensim can also train FastText models; please consult the documentation for that method, as well as to better understand the various training parameters.

SIMILARITY ANALYSIS

A good way to see the effect of embedding vectors is through similarity analysis. The calculations are based on the closeness of vectors in the embedding space. Our first two examples use word2vec on our newly created KBpedia-Wikipedia corpus. The first example calculates the relatedness between two entered terms:

from gensim.models import Word2Vec

path = r'C:\1-PythonProjects\kbpedia\v300\models\results\wikipedia-w2v.model'
model = Word2Vec.load(path)
model.wv.similarity('man', 'woman')

which returns:

0.6882485
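A score like the one above is a cosine similarity between the two word vectors, and for a single positive term, gensim's most_similar ranks the vocabulary by cosine similarity to that term's vector. The sketch below shows both ideas in plain Python on hypothetical 3-dimensional toy vectors (not taken from the trained model); the helper names cosine and most_similar are our own, and the real gensim methods work on normalized NumPy arrays far more efficiently.

```python
import math
from typing import Dict, List, Tuple


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity: dot product divided by the product of vector lengths."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def most_similar(vectors: Dict[str, List[float]], word: str,
                 topn: int = 3) -> List[Tuple[str, float]]:
    """Rank every other word by cosine similarity to `word`, highest first."""
    target = vectors[word]
    scores = [(other, cosine(target, vec)) for other, vec in vectors.items() if other != word]
    scores.sort(key=lambda pair: pair[1], reverse=True)
    return scores[:topn]


# Hypothetical toy vectors for illustration only
toy = {
    "man": [1.0, 0.2, 0.0],
    "woman": [0.9, 0.4, 0.1],
    "king": [0.8, 0.1, 0.6],
    "banana": [0.0, 0.1, 1.0],
}
print(round(cosine(toy["man"], toy["woman"]), 4))
print(most_similar(toy, "man", topn=2))
```

Because cosine similarity ignores vector length, it compares the direction of the vectors, which is what carries the learned co-occurrence signal.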

The second example retrieves the most closely related terms given an input term or phrase (in this case, machine_learning):

from gensim.models import Word2Vec

path = r'C:\1-PythonProjects\kbpedia\v300\models\results\wikipedia-w2v.model'
model = Word2Vec.load(path)
w1 = ['machine_learning']
model.wv.most_similar(positive=w1, topn=6)

gensim offers a number of settings, including whether one can analyze without training (effectively a 'read only' option) and other parameters such as the number of results returned. We can also compare the doc2vec approach with word2vec:

from gensim.models import Doc2Vec

path = r'C:\1-PythonProjects\kbpedia\v300\models\results\wikipedia-d2v.model'
model = Doc2Vec.load(path)
w1 = ['machine_learning']
model.wv.most_similar(positive=w1, topn=6)

Note that we get a similar listing of results, though the similarity scores in this doc2vec case are much lower. These efforts conclude our embedding tests for the moment. We will be adding additional embeddings based on knowledge graph STRUCTURE and ANNOTATIONS in CWPK #67.

TEXT SUMMARIZATION

Let's now switch gears and introduce our basic natural language processing package, spaCy. Out of the box, spaCy includes the standard NLP utilities of part-of-speech tagging, lemmatization, dependency parsing, named entity recognition, entity linking, tokenization, merging and splitting, and sentence segmentation. Various vector embedding or rule-based processing methods may be layered on top of these utilities, and they may be combined into flexible NLP processing pipelines.
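The pipeline idea is simple: in spaCy, each pipeline component is a callable that receives a Doc and returns it, and components run in order so later steps can build on earlier annotations. The sketch below is a toy, pure-Python analogue of that design, not spaCy's API: a plain dict stands in for spaCy's Doc, and the component names tokenize and segment_sentences are hypothetical, with deliberately naive whitespace and punctuation rules.

```python
from typing import Callable, Dict, List

Doc = Dict[str, object]  # stand-in for spaCy's Doc object


def tokenize(doc: Doc) -> Doc:
    """Naive whitespace tokenizer."""
    doc["tokens"] = str(doc["text"]).split()
    return doc


def segment_sentences(doc: Doc) -> Doc:
    """Naive sentence splitter that relies on tokens added by an earlier component."""
    sentences, current = [], []
    for tok in doc["tokens"]:
        current.append(tok)
        if tok.endswith((".", "!", "?")):
            sentences.append(current)
            current = []
    if current:
        sentences.append(current)
    doc["sents"] = sentences
    return doc


def run_pipeline(text: str, components: List[Callable[[Doc], Doc]]) -> Doc:
    """Apply each component in order, passing the doc along."""
    doc: Doc = {"text": text}
    for component in components:
        doc = component(doc)
    return doc


doc = run_pipeline("spaCy is fast. It has pipelines!", [tokenize, segment_sentences])
print(doc["sents"])
```

Swapping, adding, or reordering components changes the processing without touching the others, which is the flexibility the spaCy design aims for.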

We are not doing anything special here, but I wanted to include text summarization because it nicely combines many functions and utilities provided by the spaCy package. Here is an example using an existing spaCy model, en_core_web_sm, whose POS and NER components were trained on the English OntoNotes 5 corpus. (You will need to download and install such pretrained models separately, for example with python -m spacy download en_core_web_sm.) The text to be evaluated was copied and pasted from CWPK #61:

import spacy