Are you over 18 and want to see adult content?

More Annotations

A complete backup of haltern-am-see.de

Are you over 18 and want to see adult content?

A complete backup of cwilsonmeloncelli.com

Are you over 18 and want to see adult content?

A complete backup of bloggingheads.tv

Are you over 18 and want to see adult content?

A complete backup of feedbackcompany.nl

Are you over 18 and want to see adult content?

Favourite Annotations

A complete backup of https://uok.edu.in

Are you over 18 and want to see adult content?

A complete backup of https://golfbrowsing.com

Are you over 18 and want to see adult content?

A complete backup of https://caneurope.org

Are you over 18 and want to see adult content?

A complete backup of https://alternativephotography.com

Are you over 18 and want to see adult content?

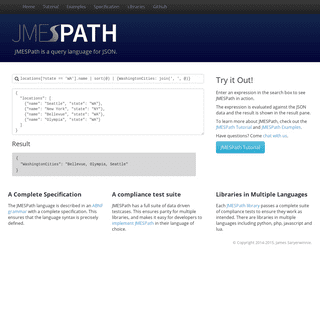

A complete backup of https://jmespath.org

Are you over 18 and want to see adult content?

A complete backup of https://pm-casinos.net

Are you over 18 and want to see adult content?

A complete backup of https://dmba.com

Are you over 18 and want to see adult content?

A complete backup of https://andalucialab.org

Are you over 18 and want to see adult content?

A complete backup of https://pathmatics.com

Are you over 18 and want to see adult content?

A complete backup of https://chasingtails.store

Are you over 18 and want to see adult content?

A complete backup of https://connellfoley.com

Are you over 18 and want to see adult content?

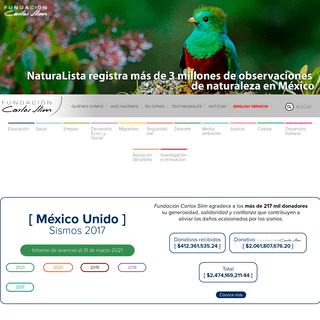

A complete backup of https://fundacioncarlosslim.org

Are you over 18 and want to see adult content?

Text

WEIGHTS & BIASES

Debug ML models. Focus your team on the hard machine learning problems and let Weights & Biases take care of the legwork: tracking and visualizing performance metrics, example predictions, and even system metrics to identify performance issues. Try W&B.

DASHBOARD - WANDB.AI

A system of record for your model results. Add a few lines to your script, and each time you train a new version of your model, you'll see a new experiment stream live to your dashboard. Fast integration: set up your code in 5 minutes. Our lightweight integration works with any Python script.
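As an illustration of "a few lines to your script", here is a minimal sketch. It assumes the wandb package is installed and you are logged in. The project name "my-project" and the decaying toy loss are placeholders, not part of the original text. The training loop takes the logging function as a parameter, so you can exercise it without wandb. Only main() touches the real wandb.init / run.log / run.finish calls.

```python
from typing import Callable, Dict


def fake_epoch_loss(epoch: int) -> float:
    """Hypothetical stand-in for one epoch of real training: a loss that decays as 1/(epoch + 1)."""
    return 1.0 / (epoch + 1)


def train(epochs: int, log: Callable[[Dict[str, float]], None]) -> None:
    """Run the toy training loop, handing one metrics dict per epoch to `log`."""
    for epoch in range(epochs):
        log({"epoch": epoch, "loss": fake_epoch_loss(epoch)})


def main() -> None:
    """Wire the loop to W&B; requires `pip install wandb` and a logged-in account."""
    import wandb  # imported here so train() can be used without wandb installed

    run = wandb.init(project="my-project", config={"epochs": 5})  # placeholder project name
    train(run.config.epochs, run.log)  # each run.log call adds a step to the live dashboard
    run.finish()
```

Calling main() starts a run whose loss curve streams to the project dashboard. Passing a plain list's append method instead of run.log is a cheap way to unit-test the loop offline.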

WEIGHTS & BIASES PRICING

Automatically version logged datasets, with diffing and deduplication handled by Weights & Biases behind the scenes. Click through the UI to explore the relationship between a given dataset and the models in your pipeline, or identify all the precursor steps to a model you currently have in production.

HOME
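W&B does the dataset versioning itself. The sketch below only illustrates the general idea behind "diffing and deduplication" using a hypothetical in-memory, content-addressed store. It is not W&B's implementation. Each file is keyed by its SHA-256 digest, so identical content is stored once, and each version is a manifest of file name to digest, which makes diffs cheap. The last function shows the real logging path (wandb.Artifact, add_dir, run.log_artifact). Its project and artifact names are placeholders.

```python
import hashlib
from typing import Dict, List


class ContentStore:
    """Toy content-addressed store (hypothetical): each distinct blob is kept once,
    and each version is a manifest mapping file name -> SHA-256 digest."""

    def __init__(self) -> None:
        self.blobs: Dict[str, bytes] = {}
        self.versions: List[Dict[str, str]] = []

    def add_version(self, files: Dict[str, bytes]) -> int:
        manifest: Dict[str, str] = {}
        for name, data in files.items():
            digest = hashlib.sha256(data).hexdigest()
            self.blobs.setdefault(digest, data)  # identical content is stored only once
            manifest[name] = digest
        self.versions.append(manifest)
        return len(self.versions) - 1  # version index

    def diff(self, a: int, b: int) -> Dict[str, List[str]]:
        old, new = self.versions[a], self.versions[b]
        return {
            "added": sorted(set(new) - set(old)),
            "removed": sorted(set(old) - set(new)),
            "changed": sorted(n for n in set(old) & set(new) if old[n] != new[n]),
        }


def log_dataset_with_wandb(path: str) -> None:
    """Real W&B path (requires wandb); names below are placeholders."""
    import wandb

    run = wandb.init(project="my-project", job_type="upload")
    artifact = wandb.Artifact("my-dataset", type="dataset")
    artifact.add_dir(path)  # W&B versions and deduplicates the contents for you
    run.log_artifact(artifact)
    run.finish()
```

For example, if a second version adds a file whose bytes match an existing one, the store gains no new blob. The diff still reports that file as added.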

Weights & Biases, developer tools for machine learning.

REPRODUCIBILITY CHALLENGE

... For Deep Convolutional Neural Networks. ML Reproducibility Challenge 2020.

W&B: A Reproducibility (Research) Perspective. How Weights & Biases optimised my attempt for the ML Reproducibility Challenge 2020.

Reformer Reproducibility. Fast.ai community submission to the Reproducibility Challenge 2020.

Rigging the Lottery: Making All Tickets Winners.

RUNNING HYPERPARAMETER SWEEPS TO PICK THE BEST MODEL ON ...

How to add a parallel coordinates chart:
Step 1: Click ‘Add visualization’ on the project page.
Step 2: Choose the parallel coordinates plot.
Step 3: Pick the dimensions (hyperparameters) you would like to visualize. Ideally, the last column should be the metric you are optimizing for (e.g. validation loss).

HYPERPARAMETER TUNING

Initialize sweep: Launch the sweep server. We host this central controller, which coordinates the agents that execute the sweep.
Launch agent(s): Run a single-line command on each machine you'd like to use to train models in the sweep. The agents ask the central sweep server which hyperparameters to try next and then execute the runs.

ONE-TO-MANY, MANY-TO-ONE AND MANY-TO-MANY LSTM EXAMPLES IN ...

One-to-many sequence problems are those where the input has a single time step and the output contains a vector of multiple values or multiple time steps: a single input produces a sequence of outputs. A typical example is image captioning, where a description of an image is generated.
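Here is a rough sketch of the one-to-many shape: one input in, a sequence of outputs out. To stay short and dependency-free it uses a toy scalar tanh RNN cell rather than an LSTM, and the weights are arbitrary illustrative values. By default the single input is fed only at the first step and zeros afterwards. With repeat_input=True the same input is fed at every step instead, similar in spirit to repeating the input with Keras's RepeatVector layer.

```python
import math
from typing import List


def one_to_many(x: float, steps: int, w_x: float = 2.0, w_h: float = 0.5,
                w_y: float = 1.0, repeat_input: bool = False) -> List[float]:
    """Unroll a toy scalar tanh RNN for `steps` time steps from a single input `x`."""
    h = 0.0
    outputs: List[float] = []
    for t in range(steps):
        # Feed x at step 0 (or every step if repeat_input), otherwise a zero input.
        x_t = x if (t == 0 or repeat_input) else 0.0
        h = math.tanh(w_x * x_t + w_h * h)  # hidden state carries information forward
        outputs.append(w_y * h)             # one output per time step
    return outputs
```

In a captioning model, x would be an image embedding and each output a word distribution. Here each output is just a float.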

GET STARTED WITH TENSORFLOW LITE EXAMPLES USING ANDROID STUDIO

TensorFlow Lite Examples. Getting started here is easy because the TensorFlow Lite team provides numerous working example projects, including object detection, gesture recognition, pose estimation, and much more. Trust me, that is a big deal and helps a lot when you are getting started. These example projects are essentially folders with specially ...

DIFFERENCE BETWEEN ‘SAME’ AND ‘VALID’ PADDING IN TENSORFLOW

In TensorFlow, tf.nn.max_pool performs max pooling on the input. Max pooling downsamples the spatial dimensions of the input to reduce the number of parameters and the computation needed to ...
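To make the ‘SAME’ vs ‘VALID’ difference concrete, here is a 1-D sketch in plain Python. It follows TensorFlow's documented output-size rules. VALID uses no padding and gives ceil((n - k + 1) / s) outputs. SAME gives ceil(n / s) outputs, padding as needed with any odd extra cell on the end. The helper names are hypothetical. In real code you would call something like tf.nn.max_pool2d(x, ksize=2, strides=2, padding="SAME") on a 4-D tensor.

```python
from typing import List, Optional, Sequence, Tuple


def output_size(n: int, k: int, s: int, padding: str) -> int:
    """Output length for input length n, window k, stride s."""
    if padding == "VALID":
        return max(0, (n - k + s) // s)  # ceil((n - k + 1) / s), no padding
    if padding == "SAME":
        return (n + s - 1) // s          # ceil(n / s)
    raise ValueError("padding must be 'SAME' or 'VALID'")


def same_padding(n: int, k: int, s: int) -> Tuple[int, int]:
    """(before, after) padding for SAME; any odd extra cell goes at the end."""
    total = max((output_size(n, k, s, "SAME") - 1) * s + k - n, 0)
    return total // 2, total - total // 2


def max_pool_1d(x: Sequence[float], k: int, s: int, padding: str) -> List[float]:
    before, after = same_padding(len(x), k, s) if padding == "SAME" else (0, 0)
    padded: List[Optional[float]] = [None] * before + list(x) + [None] * after
    out: List[float] = []
    for i in range(output_size(len(x), k, s, padding)):
        # Padded cells are skipped, so they never win the max.
        window = [v for v in padded[i * s: i * s + k] if v is not None]
        out.append(max(window))
    return out
```

With x = [1, 3, 2, 5, 4], k = 2 and s = 2, VALID drops the trailing 4 and yields two outputs. SAME pads one cell on the right and yields three.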


ENTERPRISE - WANDB.AI

W&B offers scalable tools for managing growing machine learning teams, including solutions for governance, data provenance, and data security. Use Weights & ...

TWO MINUTE PAPERS

Try our free tools for experiment tracking to easily visualize all your experiments in one place, compare results, and share findings. If you're doing machine learning, I ...

INTRODUCTION TO CONVOLUTIONAL NEURAL NETWORKS WITH WEIGHTS & BIASES

Introduction to Convolutional Neural Networks with Weights & Biases. In this tutorial we'll walk through a simple convolutional neural network to classify the images in the CIFAR-10 dataset. We highly encourage you to fork this notebook, tweak the parameters, or try the model with your own dataset!

FUNDAMENTALS OF NEURAL NETWORKS ON WEIGHTS & BIASES

With dropout, around 2^n slightly different "thinned" neural networks (where n is the number of neurons in the architecture) are sampled during training and effectively ensembled together to make predictions. As a rule of thumb, a good dropout rate is between 0.1 and 0.5: around 0.3 for RNNs and 0.5 for CNNs. Use larger rates for bigger layers.
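The "2^n networks" remark refers to dropout's ensemble interpretation. Here is a minimal plain-Python sketch of inverted dropout, the common formulation that scales survivors at training time. The function names are hypothetical. During training each unit is zeroed with probability rate and survivors are scaled by 1/(1 - rate), so inference needs no rescaling. Each distinct keep/drop mask is one thinned subnetwork, and there are 2^n of them for n units.

```python
import itertools
import random
from typing import List, Sequence, Tuple


def dropout(x: Sequence[float], rate: float, training: bool,
            rng: random.Random) -> List[float]:
    """Inverted dropout over a flat list of activations."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(x)  # inference: the full network, no rescaling needed
    keep = 1.0 - rate
    # Keep each unit with probability `keep`; scale survivors so the expected value is unchanged.
    return [v / keep if rng.random() >= rate else 0.0 for v in x]


def all_masks(n: int) -> List[Tuple[int, ...]]:
    """Every keep (1) / drop (0) pattern over n units: 2**n thinned subnetworks."""
    return list(itertools.product((0, 1), repeat=n))
```

With rate=0.5, each surviving activation is doubled and every other one becomes 0.0.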

REPRODUCIBILITY CHALLENGE

At W&B, we strongly believe that research should be reproducible and accessible, so we’re excited to support people participating in the Reproducibility Challenge. We believe this initiative is very important, and we’re happy to do what we can to support participants.

INTRO TO KERAS WITH WEIGHTS & BIASES ON WEIGHTS & BIASES

Welcome! In this tutorial we'll walk through a simple convolutional neural network to classify the images in the Simpsons dataset using Keras. We’ll also set up Weights & Biases to log model metrics, inspect performance, and share findings about the best architecture for the network. In this example we're using Google Colab as a convenient hosted environment, but you can run your own training ...

WANDB DOCKER

Summary. wandb docker lets you run your code in a Docker image with wandb already configured. It adds the WANDB_DOCKER and WANDB_API_KEY environment variables to your container and mounts the current directory at /app by default. You can pass additional args, which will be added to docker run before the image name is declared. We'll choose a ...
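To picture what the summary describes, here is a hypothetical helper that builds a roughly equivalent docker run argument list. It is an approximation for illustration only. It is not how the wandb docker command is implemented, and the real command may set other flags. Run wandb docker --help for its actual options. The helper forwards the two environment variables by name (docker's -e NAME copies the value from the host environment), mounts the working directory at /app, and places user-supplied args before the image name.

```python
import os
from typing import List, Optional


def approx_docker_run_args(image: str, extra_args: Optional[List[str]] = None,
                           cwd: Optional[str] = None) -> List[str]:
    """Hypothetical approximation of the `docker run` call described above."""
    cwd = cwd or os.getcwd()
    args = ["docker", "run"]
    # `-e NAME` with no value tells docker to copy NAME from the host environment.
    args += ["-e", "WANDB_DOCKER", "-e", "WANDB_API_KEY"]
    args += ["-v", f"{cwd}:/app"]   # current directory mounted at /app by default
    args += list(extra_args or [])  # additional args go before the image name
    args.append(image)
    return args
```

You could pass the resulting list to subprocess.run(args, check=True) to launch the container.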

PROJECT PAGE

Project Page. Compare versions of your model, explore results in a scratch workspace, and export findings to a report to save notes and visualizations. The project workspace gives you a personal sandbox to compare experiments. Use projects to organize models that can be compared, working on the same problem with different architectures ...

THE REALITY BEHIND THE OPTIMIZATION OF IMAGINARY VARIABLES

Exploring the capabilities of neural networks to map the imaginary loss landscape and studying their applications in modern research.


FULLY CONNECTED: FASTAI

Our collection of posts that leverage W&B and the fastai deep learning library, all in one handy place.

PART 1 – INTRODUCTION TO GRAPH NEURAL NETWORKS WITH GATEDGCN

Graph representation learning is the task of effectively summarizing the structure of a graph in a low-dimensional embedding. With the rise of deep learning, researchers have come up with various architectures that use neural networks for graph representation learning. We call such architectures Graph Neural Networks.

PYTORCH LIGHTNING WITH WEIGHTS & BIASES ON WEIGHTS & BIASES

PyTorch Lightning with Weights & Biases. PyTorch Lightning lets you decouple science code from engineering code. Try this quick tutorial to visualize Lightning models and optimize hyperparameters with an easy Weights & Biases integration. Try PyTorch Lightning.

RUNNING HYPERPARAMETER SWEEPS TO PICK THE BEST MODEL ON ...

Figure: Typical hyperparameters in neural network architecture.

Hyperparameter Sweeps organize search in a very elegant way, allowing us to set up hyperparameter searches using declarative configurations and to experiment with a variety of hyperparameter tuning methods, including grid search, random search, Bayesian optimization, and Hyperband.

Running Hyperparameter Sweeps using ...
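Here is a sketch of the sweep workflow: a declarative configuration, then initializing the sweep and launching an agent. It uses W&B's dictionary sweep-config format with wandb.sweep and wandb.agent. The parameter ranges, project name, and toy loss function are illustrative assumptions, not values from the original posts. "method" can also be "grid" or "random". Hyperband is configured as an early-termination rule, which is why the toy run logs a metric every epoch. The logged val_loss is also the natural last column for a parallel coordinates chart.

```python
from typing import Any, Dict

# Declarative sweep configuration; all values here are illustrative.
SWEEP_CONFIG: Dict[str, Any] = {
    "method": "bayes",  # or "grid" / "random"
    "metric": {"name": "val_loss", "goal": "minimize"},
    "parameters": {
        "learning_rate": {"min": 1e-4, "max": 1e-1},
        "dropout": {"values": [0.1, 0.3, 0.5]},
        "batch_size": {"values": [32, 64, 128]},
    },
    "early_terminate": {"type": "hyperband", "min_iter": 3},  # stop weak runs early
}


def toy_val_loss(learning_rate: float, dropout: float, batch_size: int, epoch: int) -> float:
    """Hypothetical stand-in for a real validation loss; lowest near lr=0.01, dropout=0.3."""
    return (learning_rate - 0.01) ** 2 + abs(dropout - 0.3) + batch_size / 1e4 + 1.0 / (epoch + 1)


def train() -> None:
    """One sweep run: the agent fills run.config with the chosen hyperparameters."""
    import wandb

    run = wandb.init()
    cfg = run.config
    for epoch in range(10):
        run.log({"epoch": epoch,
                 "val_loss": toy_val_loss(cfg.learning_rate, cfg.dropout, cfg.batch_size, epoch)})
    run.finish()


def launch(project: str = "sweep-demo", count: int = 10) -> None:
    """Initialize the sweep on the W&B server, then run an agent on this machine."""
    import wandb

    sweep_id = wandb.sweep(SWEEP_CONFIG, project=project)
    wandb.agent(sweep_id, function=train, count=count)
```

To use more machines, start an agent on each one with the same sweep ID, for example with the CLI command wandb agent followed by the ID.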

Debug ML models. Focus your team on the hard machine learning problems. Let Weights & Biases take care of the legwork of tracking and visualizing performance metrics, example predictions, and even system metrics to identify performance issues. try w&B. DASHBOARD - WANDB.AI A system of record for your model results. Add a few lines to your script, and each time you train a new version of your model, you'll see a new experiment stream live to your dashboard. Fast integration. Set up your code in 5 minutes. Add a few lines to your script to start logging results. Our lightweight integration works with any Pythonscript.

HOME

Weights & Biases, developer tools for machine learning WEIGHTS & BIASES PRICING Automatically version logged datasets, with diffing and deduplication handled by Weights & Biases, behind the scenes. Click through the UI to explore the relationship between a given dataset and the models in your pipeline, or identify all the precursor steps to a model you currently have in production. RUNNING HYPERPARAMETER SWEEPS TO PICK THE BEST MODEL ON How to Add a Parallel Coordinates Chart. Step 1: Click ‘Add visualization’ on the project page. Step 2: Choose the parallel coordinates plot. Step 3: Pick the dimensions (hyperparameters) you would like to visualize. Ideally, you want the last column to be the metric you are optimizing for (e.g. validation loss).WEIGHTS & BIASES

Debug ML models. Focus your team on the hard machine learning problems. Let Weights & Biases take care of the legwork of tracking and visualizing performance metrics, example predictions, and even system metrics to identify performance issues. try w&B. DASHBOARD - WANDB.AI A system of record for your model results. Add a few lines to your script, and each time you train a new version of your model, you'll see a new experiment stream live to your dashboard. Fast integration. Set up your code in 5 minutes. Add a few lines to your script to start logging results. Our lightweight integration works with any Pythonscript.

HOME

Weights & Biases, developer tools for machine learning WEIGHTS & BIASES PRICING Automatically version logged datasets, with diffing and deduplication handled by Weights & Biases, behind the scenes. Click through the UI to explore the relationship between a given dataset and the models in your pipeline, or identify all the precursor steps to a model you currently have in production. RUNNING HYPERPARAMETER SWEEPS TO PICK THE BEST MODEL ON How to Add a Parallel Coordinates Chart. Step 1: Click ‘Add visualization’ on the project page. Step 2: Choose the parallel coordinates plot. Step 3: Pick the dimensions (hyperparameters) you would like to visualize. Ideally, you want the last column to be the metric you are optimizing for (e.g. validation loss).WANDB DOCKER

Summary. W&B docker lets you run your code in a docker image ensuring wandb is configured. It adds the WANDB_DOCKER and WANDB_API_KEY environment variables to your container and mounts the current directory in /app by default. You can pass additional args which will be added to docker run before the image name is declared, we'll choosea

ONE-TO-MANY, MANY-TO-ONE AND MANY-TO-MANY LSTM EXAMPLES IN One-to-Many. One-to-many sequence problems are sequence problems where the input data has one time-step, and the output contains a vector of multiple values or multiple time-steps. Thus, we have a single input and a sequence of outputs. A typical example is image captioning, where the description of an image is generated. HYPERPARAMETER TUNING Initialize sweep: Launch the sweep server. We host this central controller and coordinate between the agents that execute the sweep. Launch agent (s): Run a single-line command on each machine you'd like to use to train models in the sweep. The agents ask the central sweep server what hyperparameters to try next, and then they execute theruns.

GET STARTED WITH TENSORFLOW LITE EXAMPLES USING ANDROID STUDIO TensorFlow Lite Examples. Now, the reason why it's so easy to get started here is that the TensorFlow Lite team actually provides us with numerous examples of working projects, including object detection, gesture recognition, pose estimation & much, much more. And trust me, that is a big deal and helps a lot with getting started.. These example projects are essentially folders with specially DIFFERENCE BETWEEN ‘SAME’ AND ‘VALID’ PADDING IN TENSORFLOW In TensorFlow, tf.nn.max_pool performs max pooling on the input. Max pooling is used to downsample the spatial dimension of input to reduce the number of parameters and computation needed toTWO MINUTE PAPERS

Try our free tools for experiment tracking to easily visualize all your experiments in one place, compare results, and share findings. If you're doing machine learning, I FUNDAMENTALS OF NEURAL NETWORKS ON WEIGHTS & BIASES Around 2^n (where n is the number of neurons in the architecture) slightly-unique neural networks are generated during the training process, and ensembled together to make predictions. A good dropout rate is between 0.1 to 0.5; 0.3 for RNNs, and 0.5 for CNNs. Use larger rates for bigger layers. INTRODUCTION TO CONVOLUTIONAL NEURAL NETWORKS WITH WEIGHTS Introduction to Convolutional Neural Networks with Weights & Biases. In this tutorial we'll walk through a simple convolutional neural network to classify the images in the cifar10 dataset. You can find the accompanying code here. We highly encourage you to fork this notebook, tweak the parameters, or try the model with your owndataset!

FULLY CONNECTED: FASTAI Our collection of posts that leverage W&B and the fastai deep learning library, all in one handy place INTRO TO KERAS WITH WEIGHTS & BIASES ON WEIGHTS & BIASES Welcome! In this tutorial we'll walk through a simple convolutional neural network to classify the images in the Simpson dataset using Keras. We’ll also set up Weights & Biases to log models metrics, inspect performance and share findings about the best architecture for the network. In this example we're using Google Colab as a convenient hosted environment, but you can run your own trainingAUTHORIZE - W&B

Weights & Biases, developer tools for machine learning RUNNING HYPERPARAMETER SWEEPS TO PICK THE BEST MODEL ON Typical Hyperparameters in Neural Network Architecture - Source Hyperparameter Sweeps organize search in a very elegant way, allowing us to: Set up hyperparameter searches using declarative configurations; Experiment with a variety of hyperparameter tuning methods including grid search, random search, Bayesian optimization, and Hyperband; Running Hyperparameter Sweeps using PART 1 – INTRODUCTION TO GRAPH NEURAL NETWORKS WITH GATEDGCN Graph Representation Learning is the task of effectively summarizing the structure of a graph in a low dimensional embedding. With the rise of deep learning, researchers have come up with various architectures that involve the use of neural networks for graph representation learning. We call such architectures Graph Neural Networks. PYTORCH LIGHTNING WITH WEIGHTS & BIASES ON WEIGHTS & BIASES Pytorch Lightning with Weights & Biases. PyTorch Lightning lets you decouple science code from engineering code. Try this quick tutorial to visualize Lightning models and optimize hyperparameters with an easy Weights & Biases integration. Try Pytorch Lightning THE REALITY BEHIND THE OPTIMIZATION OF IMAGINARY VARIABLES Exploring the capabilities of neural networks to map the imaginary loss landscape and studying their applications in modern research.WEIGHTS & BIASES

Debug ML models. Focus your team on the hard machine learning problems. Let Weights & Biases take care of the legwork of tracking and visualizing performance metrics, example predictions, and even system metrics to identify performance issues. try w&B. DASHBOARD - WANDB.AI A system of record for your model results. Add a few lines to your script, and each time you train a new version of your model, you'll see a new experiment stream live to your dashboard. Fast integration. Set up your code in 5 minutes. Add a few lines to your script to start logging results. Our lightweight integration works with any Pythonscript.

HOME

Weights & Biases, developer tools for machine learning WEIGHTS & BIASES PRICING Automatically version logged datasets, with diffing and deduplication handled by Weights & Biases, behind the scenes. Click through the UI to explore the relationship between a given dataset and the models in your pipeline, or identify all the precursor steps to a model you currently have in production. RUNNING HYPERPARAMETER SWEEPS TO PICK THE BEST MODEL ON How to Add a Parallel Coordinates Chart. Step 1: Click ‘Add visualization’ on the project page. Step 2: Choose the parallel coordinates plot. Step 3: Pick the dimensions (hyperparameters) you would like to visualize. Ideally, you want the last column to be the metric you are optimizing for (e.g. validation loss).WANDB DOCKER

Summary. W&B docker lets you run your code in a docker image ensuring wandb is configured. It adds the WANDB_DOCKER and WANDB_API_KEY environment variables to your container and mounts the current directory in /app by default. You can pass additional args which will be added to docker run before the image name is declared, we'll choosea

ONE-TO-MANY, MANY-TO-ONE AND MANY-TO-MANY LSTM EXAMPLES IN One-to-Many. One-to-many sequence problems are sequence problems where the input data has one time-step, and the output contains a vector of multiple values or multiple time-steps. Thus, we have a single input and a sequence of outputs. A typical example is image captioning, where the description of an image is generated. HYPERPARAMETER TUNING Initialize sweep: Launch the sweep server. We host this central controller and coordinate between the agents that execute the sweep. Launch agent (s): Run a single-line command on each machine you'd like to use to train models in the sweep. The agents ask the central sweep server what hyperparameters to try next, and then they execute theruns.

GET STARTED WITH TENSORFLOW LITE EXAMPLES USING ANDROID STUDIO TensorFlow Lite Examples. Now, the reason why it's so easy to get started here is that the TensorFlow Lite team actually provides us with numerous examples of working projects, including object detection, gesture recognition, pose estimation & much, much more. And trust me, that is a big deal and helps a lot with getting started.. These example projects are essentially folders with specially DIFFERENCE BETWEEN ‘SAME’ AND ‘VALID’ PADDING IN TENSORFLOW In TensorFlow, tf.nn.max_pool performs max pooling on the input. Max pooling is used to downsample the spatial dimension of input to reduce the number of parameters and computation needed toWEIGHTS & BIASES

Debug ML models. Focus your team on the hard machine learning problems. Let Weights & Biases take care of the legwork of tracking and visualizing performance metrics, example predictions, and even system metrics to identify performance issues. try w&B. DASHBOARD - WANDB.AI A system of record for your model results. Add a few lines to your script, and each time you train a new version of your model, you'll see a new experiment stream live to your dashboard. Fast integration. Set up your code in 5 minutes. Add a few lines to your script to start logging results. Our lightweight integration works with any Pythonscript.

HOME

Weights & Biases, developer tools for machine learning WEIGHTS & BIASES PRICING Automatically version logged datasets, with diffing and deduplication handled by Weights & Biases, behind the scenes. Click through the UI to explore the relationship between a given dataset and the models in your pipeline, or identify all the precursor steps to a model you currently have in production. RUNNING HYPERPARAMETER SWEEPS TO PICK THE BEST MODEL ON How to Add a Parallel Coordinates Chart. Step 1: Click ‘Add visualization’ on the project page. Step 2: Choose the parallel coordinates plot. Step 3: Pick the dimensions (hyperparameters) you would like to visualize. Ideally, you want the last column to be the metric you are optimizing for (e.g. validation loss).WANDB DOCKER

Summary. W&B docker lets you run your code in a Docker image with wandb already configured. It adds the WANDB_DOCKER and WANDB_API_KEY environment variables to your container and mounts the current directory at /app by default. You can pass additional args, which will be added to docker run before the image name is declared; we'll choose a …
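The behavior summarized above comes down to assembling a `docker run` command. The sketch below shows that assembly under stated assumptions. It is not the `wandb docker` source. The helper `build_docker_run` is hypothetical, and setting `WANDB_DOCKER` to the image name is an assumption. It uses only standard `docker run` flags (`-e`, `-v`, `-w`, `--gpus`) and builds the argument list without executing anything.

```python
import os
from typing import List, Optional


def build_docker_run(image: str, command: List[str], api_key: str,
                     extra_args: Optional[List[str]] = None,
                     host_dir: Optional[str] = None) -> List[str]:
    """Assemble (but do not run) a `docker run` argv that hypothetically
    mirrors the behavior summarized above."""
    host_dir = host_dir or os.getcwd()
    argv = [
        "docker", "run",
        # The two environment variables the summary says are added.
        "-e", f"WANDB_DOCKER={image}",     # assumption: the value is the image name
        "-e", f"WANDB_API_KEY={api_key}",
        # Mount the current directory at /app (the described default) ...
        "-v", f"{host_dir}:/app",
        # ... and, as our own addition, run the command from that directory.
        "-w", "/app",
    ]
    # User-supplied args are placed before the image name, as described.
    argv += extra_args or []
    argv.append(image)
    argv += command
    return argv


cmd = build_docker_run("python:3.9", ["python", "train.py"], api_key="YOUR_API_KEY",
                       extra_args=["--gpus", "all"], host_dir="/home/me/project")
print(" ".join(cmd))
# To actually start the container: subprocess.run(cmd, check=True)
```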

ONE-TO-MANY, MANY-TO-ONE AND MANY-TO-MANY LSTM EXAMPLES IN

One-to-Many. One-to-many sequence problems are those where the input data has one time step and the output contains a vector of multiple values or multiple time steps. Thus, we have a single input and a sequence of outputs. A typical example is image captioning, where a description of an image is generated.

HYPERPARAMETER TUNING

Initialize sweep: Launch the sweep server. We host this central controller and coordinate between the agents that execute the sweep.

Launch agent(s): Run a single-line command on each machine you'd like to use to train models in the sweep. The agents ask the central sweep server which hyperparameters to try next, and then they execute the runs.
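The controller/agent split in the sweep description can be mimicked in a single process. This is a hypothetical sketch, not W&B's sweep server. A queue stands in for the central controller, threads stand in for agents on separate machines, and random search is assumed as the strategy. `train` is a stand-in that returns a made-up loss instead of training a model.

```python
import queue
import random
import threading
from typing import Dict, List, Tuple


def train(params: Dict[str, float]) -> float:
    """Stand-in for a training run: returns a made-up validation loss."""
    return (params["learning_rate"] - 0.01) ** 2 + 0.1 * params["dropout"]


def controller(n_trials: int, seed: int = 0) -> queue.Queue:
    """Central controller: decides which configurations to try (random search).
    For simplicity, all configurations are proposed up front here."""
    rng = random.Random(seed)
    work: queue.Queue = queue.Queue()
    for _ in range(n_trials):
        work.put({"learning_rate": rng.uniform(1e-4, 1e-1),
                  "dropout": rng.choice([0.1, 0.3, 0.5])})
    return work


def agent(work: queue.Queue, results: List[Tuple[float, Dict]],
          lock: threading.Lock) -> None:
    """Agent: keeps asking for the next configuration until none are left."""
    while True:
        try:
            params = work.get_nowait()
        except queue.Empty:
            return
        loss = train(params)
        with lock:
            results.append((loss, params))


work = controller(n_trials=20)
results: List[Tuple[float, Dict]] = []
lock = threading.Lock()
agents = [threading.Thread(target=agent, args=(work, results, lock)) for _ in range(4)]
for t in agents:
    t.start()
for t in agents:
    t.join()

best_loss, best_params = min(results, key=lambda r: r[0])
print(f"{len(results)} runs, best loss {best_loss:.4f} with {best_params}")
```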

TWO MINUTE PAPERS

Try our free tools for experiment tracking to easily visualize all your experiments in one place, compare results, and share findings. If you're doing machine learning, I …

FUNDAMENTALS OF NEURAL NETWORKS ON WEIGHTS & BIASES

With dropout, around 2^n (where n is the number of neurons in the architecture) slightly different neural networks are generated during the training process and ensembled together to make predictions. A good dropout rate is between 0.1 and 0.5: 0.3 for RNNs and 0.5 for CNNs. Use larger rates for bigger layers.

INTRODUCTION TO CONVOLUTIONAL NEURAL NETWORKS WITH WEIGHTS

Introduction to Convolutional Neural Networks with Weights & Biases. In this tutorial we'll walk through a simple convolutional neural network to classify the images in the CIFAR-10 dataset. You can find the accompanying code here. We highly encourage you to fork this notebook, tweak the parameters, or try the model with your own dataset!
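The dropout advice in the fundamentals snippet assumes the usual training-time behavior. Here is a minimal plain-Python sketch of inverted dropout. It is our own illustration, not code from the article. Each activation is zeroed with probability `p`, and the survivors are scaled by `1/(1-p)` so the expected value is unchanged. At inference time the layer is the identity. Each of the n units can independently be kept or dropped, so there are 2^n possible masks, which is where the "ensemble of thinned networks" intuition comes from.

```python
import random
from typing import List, Optional


def dropout(activations: List[float], p: float, training: bool,
            rng: Optional[random.Random] = None) -> List[float]:
    """Inverted dropout: zero each unit with probability p, rescale the rest."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        return list(activations)            # identity at inference time
    rng = rng or random.Random()
    scale = 1.0 / (1.0 - p)                 # keeps the expected activation unchanged
    return [a * scale if rng.random() >= p else 0.0 for a in activations]


x = [1.0] * 10
print(dropout(x, p=0.5, training=True, rng=random.Random(0)))  # each entry is 0.0 or 2.0
print(dropout(x, p=0.5, training=False))                        # unchanged
```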

FULLY CONNECTED: FASTAI

Our collection of posts that leverage W&B and the fastai deep learning library, all in one handy place.

INTRO TO KERAS WITH WEIGHTS & BIASES ON WEIGHTS & BIASES

Welcome! In this tutorial we'll walk through a simple convolutional neural network to classify the images in the Simpson dataset using Keras. We'll also set up Weights & Biases to log model metrics, inspect performance, and share findings about the best architecture for the network. In this example we're using Google Colab as a convenient hosted environment, but you can run your own training …

AUTHORIZE - W&B

Weights & Biases, developer tools for machine learning

RUNNING HYPERPARAMETER SWEEPS TO PICK THE BEST MODEL ON

(Figure: Typical Hyperparameters in Neural Network Architecture)

Hyperparameter sweeps organize the search in a very elegant way, allowing us to set up hyperparameter searches using declarative configurations, and to experiment with a variety of hyperparameter tuning methods, including grid search, random search, Bayesian optimization, and Hyperband.

Running Hyperparameter Sweeps using …

PART 1 – INTRODUCTION TO GRAPH NEURAL NETWORKS WITH GATEDGCN

Graph representation learning is the task of effectively summarizing the structure of a graph in a low-dimensional embedding. With the rise of deep learning, researchers have come up with various architectures that use neural networks for graph representation learning. We call such architectures Graph Neural Networks.

PYTORCH LIGHTNING WITH WEIGHTS & BIASES ON WEIGHTS & BIASES

PyTorch Lightning with Weights & Biases. PyTorch Lightning lets you decouple science code from engineering code. Try this quick tutorial to visualize Lightning models and optimize hyperparameters with an easy Weights & Biases integration.

THE REALITY BEHIND THE OPTIMIZATION OF IMAGINARY VARIABLES

Exploring the capabilities of neural networks to map the imaginary loss landscape and studying their applications in modern research.
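The sweeps snippet mentions declarative configurations together with grid and random search. A plain dictionary can show the idea. The `method` / `parameters` / `values` layout below follows the shape of a W&B sweep configuration. The expansion functions `grid` and `random_search` are a hypothetical sketch of the two search strategies, not the W&B sweep server. Bayesian optimization and Hyperband are left out.

```python
import itertools
import random
from typing import Dict, Iterator, List

sweep_config = {
    "method": "grid",
    "parameters": {
        "learning_rate": {"values": [0.001, 0.01, 0.1]},
        "batch_size": {"values": [32, 64]},
        "dropout": {"values": [0.3, 0.5]},
    },
}


def grid(config: Dict) -> Iterator[Dict]:
    """Yield every combination of the listed values (Cartesian product)."""
    names = list(config["parameters"])
    choices = [config["parameters"][n]["values"] for n in names]
    for combo in itertools.product(*choices):
        yield dict(zip(names, combo))


def random_search(config: Dict, n_trials: int, seed: int = 0) -> List[Dict]:
    """Sample each parameter independently for a fixed number of trials."""
    rng = random.Random(seed)
    params = config["parameters"]
    return [{n: rng.choice(spec["values"]) for n, spec in params.items()}
            for _ in range(n_trials)]


trials = list(grid(sweep_config))
print(len(trials))   # 3 * 2 * 2 = 12 combinations
print(trials[0])     # {'learning_rate': 0.001, 'batch_size': 32, 'dropout': 0.3}
print(random_search(sweep_config, n_trials=3))
```

Grid search grows multiplicatively with every parameter added. Random search keeps the number of runs fixed regardless of how many parameters are swept.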



KERAS - DOCUMENTATION

| Keyword argument | Default | Description |
|---|---|---|
| monitor | val_loss | The training metric used to measure performance for saving the best model, e.g. val_loss |
| mode | auto | 'min', 'max', or 'auto': how to compare the training metric specified in monitor between steps |
| save_weights_only | … | … |
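The `monitor` and `mode` arguments in the table above reduce to one comparison per epoch. The sketch below is a hypothetical plain-Python re-implementation of that logic, not the callback's source. The rule that `'auto'` resolves to `'max'` for accuracy-like metric names and to `'min'` otherwise is an assumption, modeled on Keras's own checkpoint callbacks.

```python
from typing import List, Optional


def resolve_mode(monitor: str, mode: str = "auto") -> str:
    """Turn 'auto' into 'min' or 'max' (assumed name-based heuristic)."""
    if mode in ("min", "max"):
        return mode
    if mode != "auto":
        raise ValueError(f"mode must be 'min', 'max', or 'auto', got {mode!r}")
    return "max" if "acc" in monitor else "min"


def best_epochs(history: List[float], monitor: str = "val_loss",
                mode: str = "auto") -> List[int]:
    """Return the epochs at which a 'save best model' callback would save."""
    direction = resolve_mode(monitor, mode)
    best: Optional[float] = None
    saved = []
    for epoch, value in enumerate(history):
        improved = (best is None
                    or (direction == "min" and value < best)
                    or (direction == "max" and value > best))
        if improved:
            best = value
            saved.append(epoch)   # here the model (or only its weights) would be saved
    return saved


print(best_epochs([0.9, 0.7, 0.8, 0.6], monitor="val_loss"))       # [0, 1, 3]
print(best_epochs([0.5, 0.6, 0.55, 0.7], monitor="val_accuracy"))  # [0, 1, 3]
```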

IMAGE CLASSIFICATION USING PYTORCH LIGHTNING

A practical introduction on how to use PyTorch Lightning to improve the readability and reproducibility of your PyTorch code.


WEIGHTS & BIASES PRICING

We're building developer tools for deep learning. Add a couple of lines of code to your training script and we'll keep track of your hyperparameters, system metrics, and outputs so you can compare experiments, see live graphs of training, and easily share your findings with colleagues.

CONVOLUTIONAL NEURAL NETWORKS

Convolutional Neural Networks. All of the work we've done so far applies to any dataset where we can convert the inputs and outputs to fixed-length lists of numbers. But we have thrown out some crucial information. When we flatten an image, we lose the fact that there's meaning in …

MULTI-GPU HYPERPARAMETER SWEEPS IN THREE SIMPLE STEPS ON

Hyperparameter sweeps are ways to automatically test different configurations of your model. They address a wide range of needs, including running experiments with different test conditions, exploring your dataset, or tuning hyperparameters at large scale.
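A common way to spread a sweep across several GPUs is to start one agent per GPU. Each agent is restricted to a single device through the standard `CUDA_VISIBLE_DEVICES` environment variable. The sketch below only builds the commands and does not launch them. `wandb agent <sweep_id>` is the W&B CLI's agent command. The sweep ID and the helper name `agent_commands` are hypothetical placeholders.

```python
import os
from typing import Dict, List, Tuple


def agent_commands(sweep_id: str,
                   gpu_ids: List[int]) -> List[Tuple[List[str], Dict[str, str]]]:
    """One (argv, env) pair per GPU; each agent only sees its own device."""
    jobs = []
    for gpu in gpu_ids:
        env = dict(os.environ)
        env["CUDA_VISIBLE_DEVICES"] = str(gpu)   # pin this agent to one GPU
        jobs.append((["wandb", "agent", sweep_id], env))
    return jobs


jobs = agent_commands("my-entity/my-project/abc123", gpu_ids=[0, 1, 2, 3])
for argv, env in jobs:
    print(f"CUDA_VISIBLE_DEVICES={env['CUDA_VISIBLE_DEVICES']}", " ".join(argv))
# To launch them concurrently: [subprocess.Popen(argv, env=env) for argv, env in jobs]
```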

HYPERPARAMETER TUNING FOR KERAS AND PYTORCH MODELS ON

We're excited to launch a powerful and efficient way to do hyperparameter tuning and optimization: W&B Sweeps, in both Keras and PyTorch. With just a few lines of code, Sweeps automatically search through high-dimensional hyperparameter spaces to find the best …

REPRODUCIBILITY: DOCKER FOR MACHINE LEARNING ON WEIGHTS

Docker supports reproducibility. Much has been written about the reproducibility crisis in machine learning, and the difficulty is real. Using Docker removes one major source of variability and allows your code to continue to run painlessly in the future. When I clone a GitHub repo of an ML experiment, I always prepare for an unknown …

GRAPHCORE'S PHIL BROWN ON HOW IPUS ARE ADVANCING MACHINE

Phil shares some of the approaches, like sparsity and low precision, behind the breakthrough performance of Graphcore's Intelligence Processing Units (IPUs).


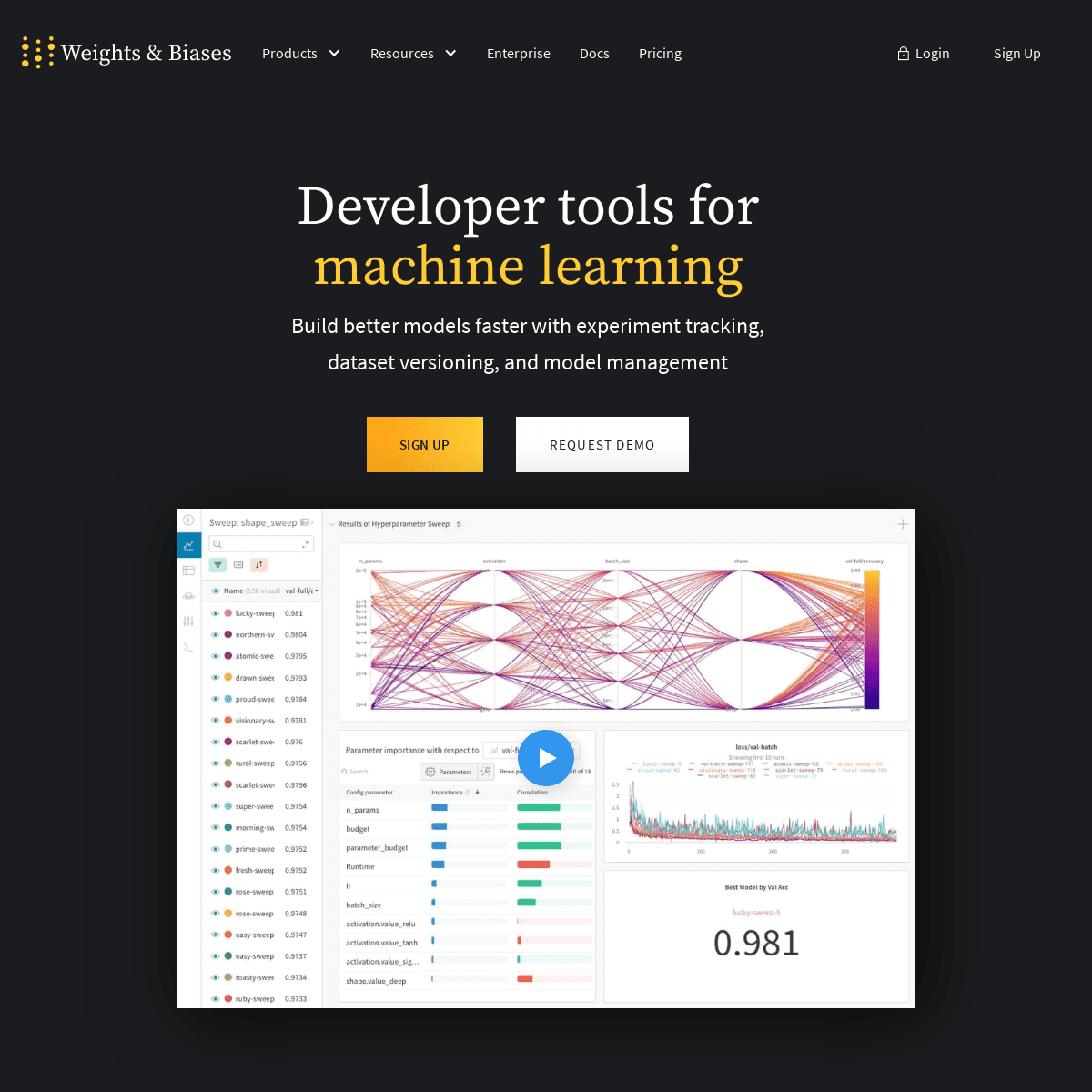

DEVELOPER TOOLS FOR MACHINE LEARNING

Build better models faster with experiment tracking, dataset versioning, and model management.

Trusted by 100,000+ ML practitioners

01

INTEGRATE QUICKLY

Track, compare, and visualize ML experiments with 5 lines of code. Free for academic and open source projects.

Any Framework

```python
# Flexible integration for any Python script
import wandb

# 1. Start a W&B run
wandb.init(project='gpt3')

# 2. Save model inputs and hyperparameters
config = wandb.config
config.learning_rate = 0.01

# Model training here

# 3. Log metrics over time to visualize performance
wandb.log({"loss": loss})
```

TensorFlow

```python
import tensorflow as tf
import wandb

# 1. Start a W&B run
wandb.init(project='gpt3')

# 2. Save model inputs and hyperparameters
config = wandb.config
config.learning_rate = 0.01

# Model training here

# 3. Log metrics over time to visualize performance
# (tf.Session is the TensorFlow 1.x graph-mode API)
with tf.Session() as sess:
    # ...
    wandb.tensorflow.log(tf.summary.merge_all())
```

PyTorch

```python
import wandb

# 1. Start a new run
wandb.init(project="gpt-3")

# 2. Save model inputs and hyperparameters
config = wandb.config
config.learning_rate = 0.01

# 3. Log gradients and model parameters
wandb.watch(model)

for batch_idx, (data, target) in enumerate(train_loader):
    # ... forward pass, loss computation, and optimizer step here
    if batch_idx % args.log_interval == 0:
        # 4. Log metrics to visualize performance
        wandb.log({"loss": loss})
```

Keras

```python
import wandb
from wandb.keras import WandbCallback

# 1. Start a new run
wandb.init(project="gpt-3")

# 2. Save model inputs and hyperparameters
config = wandb.config
config.learning_rate = 0.01

# ... Define a model

# 3. Log layer dimensions and metrics over time
model.fit(X_train, y_train, validation_data=(X_test, y_test),
          callbacks=[WandbCallback()])
```

Scikit

```python
import wandb

wandb.init(project="visualize-sklearn")

# Model training here

# Log classifier visualizations
wandb.sklearn.plot_classifier(clf, X_train, X_test, y_train, y_test, y_pred, y_probas,
                              labels, model_name='SVC', feature_names=None)

# Log regression visualizations
wandb.sklearn.plot_regressor(reg, X_train, X_test, y_train, y_test, model_name='Ridge')

# Log clustering visualizations
wandb.sklearn.plot_clusterer(kmeans, X_train, cluster_labels, labels=None,
                             model_name='KMeans')
```

Hugging Face

```python
# 1. Import wandb and log in
import wandb
from transformers import Trainer, TrainingArguments

wandb.login()

# 2. Define which wandb project to log to and name your run
wandb.init(project="gpt-3", name='gpt-3-base-high-lr')

# 3. Add wandb in your Hugging Face `TrainingArguments`
args = TrainingArguments(..., report_to='wandb')

# 4. W&B logging will begin automatically when you start training your Trainer
trainer = Trainer(..., args=args)
trainer.train()
```

XGBoost

```python
import wandb
import xgboost

# 1. Start a new run
wandb.init(project="visualize-models", name="xgboost")

# 2. Add the callback
bst = xgboost.train(param, xg_train, num_round, watchlist,
                    callbacks=[...])

# Get predictions
pred = bst.predict(xg_test)
```

02

VISUALIZE SEAMLESSLY

Add W&B's lightweight integration to your existing ML code and quickly get live metrics, terminal logs, and system stats streamed to the centralized dashboard.

03

COLLABORATE IN REAL TIME

Explain how your model works, show graphs of how model versions improved, discuss bugs, and demonstrate progress towards milestones.

DESIGNED FOR ALL USE CASES

Practitioners

Train + Evaluate + Share

Team

Scale + Collaborate

Enterprise

Manage + Reproduce + Govern

Dashboard

Sweeps

Artifacts

Reports

CENTRAL DASHBOARD

A SYSTEM OF RECORD FOR YOUR MODEL RESULTS

Add a few lines to your script, and each time you train a new version of your model, you'll see a new experiment stream live to your dashboard.

HYPERPARAMETER SWEEP

TRY DOZENS OF MODEL VERSIONS QUICKLY

Optimize models with our massively scalable hyperparameter search tool. Sweeps are lightweight, fast to set up, and plug into your existing infrastructure for running models.
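One way to try many model versions cheaply is to stop unpromising ones early. Below is a minimal, hypothetical sketch of successive halving, the routine that Hyperband runs repeatedly with different starting budgets. Every configuration gets a small budget, the best 1/eta survive, and the survivors get eta times more budget. `fake_evaluate` is a made-up stand-in for partial training. This is not how W&B implements early termination.

```python
import math
from typing import Callable, Dict, List


def successive_halving(configs: List[Dict], evaluate: Callable[[Dict, int], float],
                       min_budget: int = 1, eta: int = 2) -> Dict:
    """Return the last surviving config after repeatedly keeping the best 1/eta."""
    budget = min_budget
    survivors = list(configs)
    while len(survivors) > 1:
        # Score every survivor at the current budget (lower loss is better).
        survivors.sort(key=lambda c: evaluate(c, budget))
        # Keep the top 1/eta and give them eta times more budget next round.
        survivors = survivors[:max(1, len(survivors) // eta)]
        budget *= eta
    return survivors[0]


def fake_evaluate(config: Dict, budget: int) -> float:
    """Stand-in for 'train for `budget` epochs, return validation loss'."""
    distance = abs(math.log10(config["learning_rate"]) + 2)   # best near 1e-2
    return distance + 1.0 / budget


configs = [{"learning_rate": 10 ** -e} for e in (0.5, 1, 1.5, 2, 2.5, 3, 3.5, 4)]
best = successive_halving(configs, fake_evaluate)
print(best)
```

With 8 configurations and `eta=2`, this runs three rounds (8, 4, then 2 candidates). Most of the budget goes to the few configurations that looked best early.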

ARTIFACT TRACKING

LIGHTWEIGHT MODEL AND DATASET VERSIONING

Save every detail of your end-to-end machine learning pipeline: data preparation, data versioning, training, and evaluation.
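W&B handles dataset versioning for you. The general idea behind content-based deduplication and diffing can still be sketched in plain Python. This is a hypothetical illustration, not W&B's implementation. Each file is identified by a SHA-256 digest of its bytes, each version is a manifest mapping paths to digests, and identical content is stored only once.

```python
import hashlib
from typing import Dict, List


class DatasetStore:
    """Toy content-addressed store: each version is a manifest of path -> digest."""

    def __init__(self) -> None:
        self.blobs: Dict[str, bytes] = {}          # digest -> file contents
        self.versions: List[Dict[str, str]] = []   # one manifest per version

    def commit(self, files: Dict[str, bytes]) -> int:
        """Record a new version and return its index (0 for v0, 1 for v1, ...)."""
        manifest = {}
        for path, data in files.items():
            digest = hashlib.sha256(data).hexdigest()
            self.blobs.setdefault(digest, data)    # identical bytes stored once
            manifest[path] = digest
        self.versions.append(manifest)
        return len(self.versions) - 1

    def diff(self, old: int, new: int) -> Dict[str, List[str]]:
        """Compare two versions by path and content digest."""
        a, b = self.versions[old], self.versions[new]
        return {
            "added": sorted(b.keys() - a.keys()),
            "removed": sorted(a.keys() - b.keys()),
            "changed": sorted(p for p in a.keys() & b.keys() if a[p] != b[p]),
        }


store = DatasetStore()
v0 = store.commit({"train.csv": b"x,y\n1,0\n", "test.csv": b"x,y\n2,1\n"})
v1 = store.commit({"train.csv": b"x,y\n1,0\n3,1\n", "test.csv": b"x,y\n2,1\n",
                   "val.csv": b"x,y\n4,0\n"})
print(store.diff(v0, v1))   # val.csv added, train.csv changed
print(len(store.blobs))     # 4 unique blobs for 5 file entries
```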

COLLABORATIVE DOCUMENTS

EXPLORE RESULTS AND SHARE FINDINGS

It's never been easier to share project updates. Explain how your model works, show graphs of how model versions improved, discuss bugs, and demonstrate progress towards milestones.

Collaboration

Reproducibility

Debugging

Transparency

COLLABORATION

SEAMLESSLY SHARE PROGRESS ACROSS PROJECTS

Manage team projects with a lightweight system of record. It's easy to hand off projects when every experiment is automatically well documented and saved centrally.

REPRODUCE RESULTS

EFFORTLESSLY CAPTURE CONFIGURATIONS

With Weights & Biases experiment tracking, your team can standardize tracking for experiments and capture hyperparameters, metrics, input data, and the exact code version that trained each model.
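Capturing hyperparameters, metrics, input data, and code version amounts to writing one self-describing record per training run. The helper below is a hypothetical sketch of such a record, not part of W&B. It fingerprints the input data with SHA-256 and reads the current commit with `git rev-parse HEAD`. Outside a Git checkout, or when Git is not installed, it falls back to `None`.

```python
import hashlib
import json
import subprocess
from typing import Dict, Optional


def current_commit() -> Optional[str]:
    """Return the Git commit of the working directory, or None if unavailable."""
    try:
        out = subprocess.run(["git", "rev-parse", "HEAD"], capture_output=True,
                             text=True, check=True)
    except (OSError, subprocess.CalledProcessError):
        return None
    return out.stdout.strip()


def run_record(config: Dict, metrics: Dict[str, float], data: bytes) -> Dict:
    """Bundle everything needed to trace a result back to its inputs."""
    return {
        "config": dict(config),                            # hyperparameters
        "metrics": dict(metrics),                          # final results
        "data_sha256": hashlib.sha256(data).hexdigest(),   # input data fingerprint
        "code_version": current_commit(),                  # exact code version
    }


record = run_record({"learning_rate": 0.01, "epochs": 5}, {"val_loss": 0.42},
                    data=b"x,y\n1,0\n2,1\n")
print(json.dumps(record, indent=2))
```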

DEBUG ML MODELS

FOCUS YOUR TEAM ON THE HARD MACHINE LEARNING PROBLEMS

Let Weights & Biases take care of the legwork of tracking and visualizing performance metrics, example predictions, and even system metrics to identify performance issues.

TRANSPARENCY

SHARE UPDATES ACROSS YOUR ORGANIZATION

It's never been easier to share project updates. Explain how your model works, show graphs of how model versions improved, discuss bugs, and demonstrate progress towards milestones.

Governance

Provenance

Efficiency

Security

GOVERNANCE

PROTECT AND MANAGE VALUABLE IP

Use this central platform to reliably track all your organization's machine learning models, from experimentation to production. Centrally manage access controls and artifact audit logs, with a complete model history that enables traceable model results.

DATA PROVENANCE

RELIABLE RECORDS FOR AUDITING MODELS

Capture all the inputs, transformations, and systems involved in building a production model. Safeguard valuable intellectual property with all the necessary context to understand and build upon models, even after team members leave.

ORGANIZATIONAL EFFICIENCY

UNLOCK PRODUCTIVITY, ACCELERATE RESEARCH

With a well-integrated pipeline, your machine learning teams move quickly and build valuable models in less time. Use Weights & Biases to empower your team to share insights and build models faster.

DATA SECURITY

INSTALL IN PRIVATE CLOUD AND ON-PREM

Data security is a cornerstone of our machine learning platform. We support enterprise installations in private cloud and on-prem clusters, and plug in easily with other enterprise-grade tools in your machine learning workflow.

TRUSTED BY 100,000+ MACHINE LEARNING PRACTITIONERS AT 200+ COMPANIES AND RESEARCH INSTITUTIONS

"W&B was fundamental for launching our internal machine learning systems, as it enables collaboration across various teams."

Hamel Husain, GitHub

"W&B is a key piece of our fast-paced, cutting-edge, large-scale research workflow: great flexibility, performance, and user experience."

Adrien Gaidon, Toyota Research Institute

"W&B allows us to scale up insights from a single researcher to the entire team and from a single machine to thousands."

Wojciech Zaremba, Cofounder of OpenAI

ABOUT WEIGHTS & BIASES

Our mission is to build the best tools for machine learning. Use W&B for experiment tracking, dataset versioning, and collaborating on ML projects.

NEVER LOSE TRACK OF ANOTHER ML PROJECT. TRY WEIGHTS & BIASES TODAY.

FEATURED PROJECTS

Once you’re using W&B to track and visualize ML experiments, it’s seamless to create a report to showcase your work.

01 OpenAI Jukebox

Exploring generative models that create music based on raw audio

02 Lighting Effects

Use RGB-space geometry to generate digital painting lighting effects

03 Political Advertising

Fuzzy string search (or binary matching) on entity names from receipt PDFs

04 Visualize Predictions

Visualize images, videos, audio, tables, HTML, metrics, plots, 3D objects, and more

05 Semantic Segmentation

Semantic segmentation for scene parsing on Berkeley Deep Drive 100K

06 Debugging Models

How ML engineers at Latent Space quickly iterate on models with W&B reports


ACCESS THE WHITE PAPER

Peruse our findings on how building the right technical stack for your machine learning team supports core business efforts and safeguards IP.

STAY CONNECTED WITH THE ML COMMUNITY

Working on machine learning projects? We're bringing together ML practitioners from across industry and academia.

SLACK

Join our Slack community of over 5,000 machine learning engineers.

PODCAST

Get a behind-the-scenes look at production ML with industry leaders.

WEBINAR

Join virtual events and get insights on best practices for your ML projects.

SALON

Curated discussions with practitioners about their ML projects.


Copyright © 2021 Weights & Biases. All rights reserved.
