Text

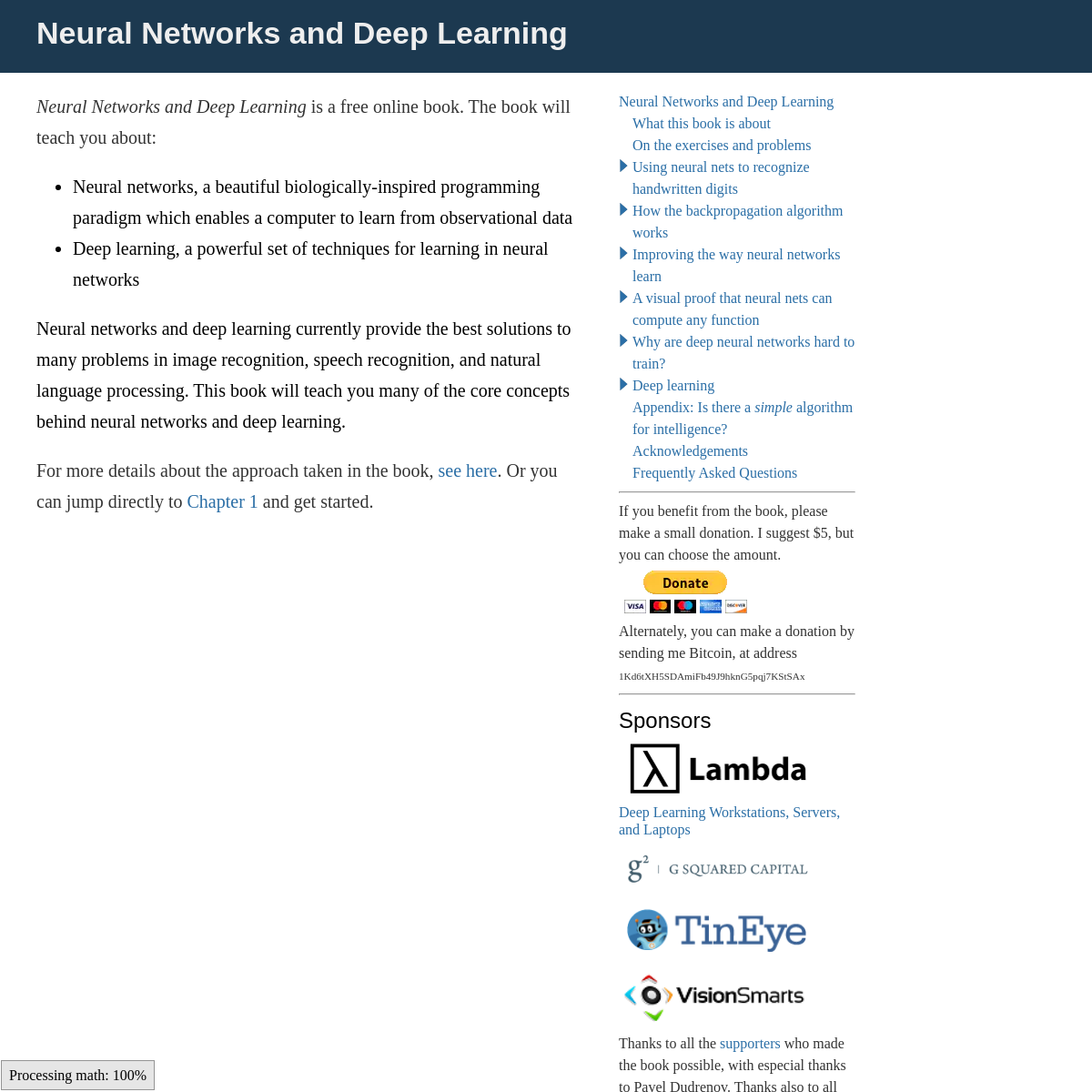

observational data

NEURAL NETWORKS AND DEEP LEARNING

$\delta^l = ((w^{l+1})^T \delta^{l+1}) \odot \sigma'(z^l)$. This means that $\delta^l_j$ is likely to get small if the neuron is near saturation. And this, in turn, means that any weights input to a saturated neuron will learn slowly*.

*This reasoning won't hold if $(w^{l+1})^T \delta^{l+1}$ has large enough entries to compensate for the smallness of $\sigma'(z^l_j)$.

In this book, we've focused on the nuts and bolts of neural networks: how they work, and how they can be used to solve pattern recognition problems.
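The backpropagation relation quoted above, $\delta^l = ((w^{l+1})^T \delta^{l+1}) \odot \sigma'(z^l)$, can be illustrated with a small numerical sketch. The function name `backpropagate_error` and all the toy values below are assumptions chosen for illustration, not taken from the book; the point is only to show how the $\sigma'(z^l)$ factor shrinks the error once a neuron saturates.

```python
import numpy as np

def sigmoid(z):
    """Logistic function."""
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    """Derivative of the logistic function."""
    s = sigmoid(z)
    return s * (1.0 - s)

def backpropagate_error(w_next, delta_next, z):
    """Compute delta^l = ((w^{l+1})^T delta^{l+1}) ⊙ sigma'(z^l).

    w_next:     weights of layer l+1, shape (n_{l+1}, n_l)
    delta_next: error of layer l+1, shape (n_{l+1}, 1)
    z:          weighted inputs of layer l, shape (n_l, 1)
    """
    return (w_next.T @ delta_next) * sigmoid_prime(z)

# Toy values (assumptions): 3 neurons in layer l, 2 neurons in layer l+1.
w_next = np.array([[0.5, -0.3, 0.8],
                   [0.1, 0.7, -0.2]])
delta_next = np.array([[0.4], [-0.6]])

z_active = np.zeros((3, 1))          # sigma'(0) = 0.25, its maximum
z_saturated = np.full((3, 1), 10.0)  # sigma'(10) is roughly 4.5e-5

delta_active = backpropagate_error(w_next, delta_next, z_active)
delta_saturated = backpropagate_error(w_next, delta_next, z_saturated)

print(delta_active.ravel())
print(delta_saturated.ravel())
```

With the same upstream error, the saturated layer receives an error several orders of magnitude smaller, which is the slow-learning effect the passage describes.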

It's not uncommon for technical books to include an admonition from the author that readers must do the exercises and problems. I always feel a little peculiar when I read such warnings.

One of the most striking facts about neural networks is that they can compute any function at all. That is, suppose someone hands you some complicated, wiggly function, f(x): No matter what the function, there is guaranteed to be a neural network so that for every possible input, x, the value f(x) (or some

The book grew out of a set of notes I prepared for an online study group on neural networks and deep learning. Many thanks to all the participants in that study group: Paul Bloore, Chris Dawson, Andrew Doherty, Ilya Grigorik, Alex Kosorukoff, Chris Olah, and Rob Spekkens.

When a golf player is first learning to play golf, they usually spend most of their time developing a basic swing. Only gradually do they develop other shots, learning to chip, draw and fade the ball, building on and modifying their basic swing.

More generally, it turns out that the gradient in deep neural networks is unstable, tending to either explode or vanish in earlier layers. This instability is a fundamental problem for gradient-based learning in deep neural networks. It's something we need to understand, and, if possible, take steps to address.

In academic work, please cite this book as: Michael A. Nielsen, "Neural Networks and Deep Learning", Determination Press, 2015. This work is licensed under a Creative Commons Attribution-NonCommercial 3.0 Unported License. This means you're free to copy, share, and build on this book, but not to sell it.

Neural Networks and Deep Learning is a free online book. The book will teach you about: Neural networks, a beautiful biologically-inspired programming paradigm which enables a computer to learn from observational data

I obtained a best classification accuracy of $97.80$ percent. This is the classification accuracy on the test_data, evaluated at the training epoch where we get the best classification accuracy on the validation_data. Using the validation data to decide when to evaluate the test accuracy helps avoid overfitting to the test data (see this earlier discussion of the use of validation data).

The biases and weights in the Network object are all initialized randomly, using the Numpy np.random.randn function to generate Gaussian distributions with mean $0$ and standard deviation $1$. This random initialization gives our stochastic gradient descent algorithm a place to start from. In later chapters we'll find better ways of initializing the weights and biases, but this will do for now.

Neural networks are one of the most beautiful programming paradigms ever invented. In the conventional approach to programming, we tell the computer what to do, breaking big problems up into many small, precisely defined tasks that the computer can easily perform.

Neural networks and deep learning. What this book is about. On the exercises and problems. Using neural nets to recognize handwritten digits. Perceptrons. Sigmoid neurons. The architecture of neural networks. A simple network to classify handwritten digits. Learning with gradient descent.

Thank you to the following people for their help in finding and eliminating bugs and other errors in the book: Kartik Agaram, Martin Aulbach, Joseph Barrow, Maxim Baz, Ingo Blechschmidt, Charles Brauer, Greg Brockman, Cristi Burcă, Josue Ortega Caro, Gildas Chabot, Jen Dodd, Philipp Düren, Chris Ferrie, Tim Gowers, Timo Hannay, Vojtěch Havlíček, Nikola Henezi, Jay Huie, Michael Lennon
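The random initialization described in the snippet above (biases and weights drawn with np.random.randn, mean $0$ and standard deviation $1$) could be sketched roughly as follows. The function name `init_parameters`, the `sizes` argument, and the example layer sizes are assumptions made for illustration; this is not presented as the book's actual Network code.

```python
import numpy as np

def init_parameters(sizes):
    """Return (biases, weights) for a feedforward net with the given layer sizes.

    Every entry is drawn from a Gaussian with mean 0 and standard deviation 1
    via np.random.randn. The input layer gets no biases.
    """
    # One bias column vector per non-input layer.
    biases = [np.random.randn(n_out, 1) for n_out in sizes[1:]]
    # One weight matrix per pair of adjacent layers, shape (n_out, n_in).
    weights = [np.random.randn(n_out, n_in)
               for n_in, n_out in zip(sizes[:-1], sizes[1:])]
    return biases, weights

# Hypothetical layer sizes: 784 inputs, 30 hidden neurons, 10 outputs.
biases, weights = init_parameters([784, 30, 10])
print([b.shape for b in biases])
print([w.shape for w in weights])
```

Storing each weight matrix with shape (n_out, n_in) means a layer's weighted input can be written as a single matrix product of the weights with the previous layer's activations, plus the bias vector.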

We can see from this graph that when the neuron's output is close to $1$, the curve gets very flat, and so $\sigma'(z)$ gets very small. Equations (55) and (56) then tell us that $\partial C / \partial w$ and $\partial C / \partial b$ get very small. This is the origin of the learning slowdown.
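To make the learning slowdown concrete, here is a minimal sketch under stated assumptions: a single sigmoid neuron with one input, trained with the quadratic cost $C = (y - a)^2 / 2$. For that setup the chain rule gives $\partial C / \partial w = (a - y)\,\sigma'(z)\,x$ and $\partial C / \partial b = (a - y)\,\sigma'(z)$, which is presumably what Equations (55) and (56) express. The function name and the specific weights, biases, input, and target are illustrative choices, not values confirmed by the text above.

```python
import numpy as np

def sigmoid(z):
    """Logistic function."""
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    """Derivative of the logistic function."""
    s = sigmoid(z)
    return s * (1.0 - s)

def single_neuron_gradients(w, b, x, y):
    """Gradients of the quadratic cost C = (y - a)^2 / 2 for one sigmoid neuron."""
    z = w * x + b
    a = sigmoid(z)
    dC_dw = (a - y) * sigmoid_prime(z) * x
    dC_db = (a - y) * sigmoid_prime(z)
    return a, dC_dw, dC_db

# Assumed toy setup: input 1, desired output 0.
x, y = 1.0, 0.0

# Moderately wrong neuron: output around 0.82.
a_mid, dw_mid, db_mid = single_neuron_gradients(w=0.6, b=0.9, x=x, y=y)

# Badly wrong, saturated neuron: output around 0.98.
a_sat, dw_sat, db_sat = single_neuron_gradients(w=2.0, b=2.0, x=x, y=y)

print(a_mid, dw_mid, db_mid)
print(a_sat, dw_sat, db_sat)
```

The saturated neuron is further from the target yet has the smaller gradient, because $\sigma'(z)$ has collapsed where the curve is flat.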

For the most part I'm going to stick with the graphical point of view. But in what follows you may sometimes find it helpful to switch points of view, and think about things in terms of if-then-else. We can use our bump-making trick to get two bumps, by gluing two pairs of

In the last chapter we saw how neural networks can learn their weights and biases using the gradient descent algorithm. There was, however, a gap in our explanation: we didn't discuss how to compute the gradient of the cost function. That's quite a gap! In this chapter I'll explain a fast algorithm for computing such gradients, an algorithm known as backpropagation.

Imagine you're an engineer who has been asked to design a computer from scratch. One day you're working away in your office, designing logical circuits, setting out AND gates, OR gates, and so on, when your boss walks in with bad news. The customer has just added a surprising design requirement: the circuit for the entire computer must be just two layers deep:

Thanks to all the supporters who made the book possible, with especial thanks to Pavel Dudrenov. Thanks also to all the contributors to the Bugfinder Hall of Fame.

SEQUENCE TO SEQUENCE LEARNING WITH NEURAL NETWORKS

3.1 Dataset details. We used the WMT’14 English to French dataset. We trained our models on a subset of 12M sentences consisting of 348M French words and 304M English words, which is a clean “selected”

NEURAL NETWORKS AND DEEP LEARNING

The human visual system is one of the wonders of the world. Consider the following sequence of handwritten digits: Most people effortlessly recognize those digits as 504192. That ease is deceptive. In each hemisphere of our brain, humans have a primary visual cortex, also known as V1, containing 140 million

And we'll speculate about the future of neural networks and deep learning, ranging from ideas like intention-driven user interfaces, to the role of deep learning in artificial intelligence. The chapter builds on the earlier chapters in the book, making use of and integrating ideas such as backpropagation, regularization, the softmax function

Neural Networks and Deep Learning

What this book is about

On the exercises and problems

Using neural nets to recognize handwritten digits

* Perceptrons
* Sigmoid neurons
* The architecture of neural networks
* A simple network to classify handwritten digits
* Learning with gradient descent
* Implementing our network to classify digits
* Toward deep learning

How the backpropagation algorithm works

* Warm up: a fast matrix-based approach to computing the output from a neural network
* The two assumptions we need about the cost function
* The Hadamard product, $s \odot t$
* The four fundamental equations behind backpropagation
* Proof of the four fundamental equations (optional)
* The backpropagation algorithm
* The code for backpropagation
* In what sense is backpropagation a fast algorithm?
* Backpropagation: the big picture

Improving the way neural networks learn

* The cross-entropy cost function
* Overfitting and regularization
* Weight initialization
* Handwriting recognition revisited: the code
* How to choose a neural network's hyper-parameters?
* Other techniques

A visual proof that neural nets can compute any function

* Two caveats
* Universality with one input and one output
* Many input variables
* Extension beyond sigmoid neurons
* Fixing up the step functions
* Conclusion

Why are deep neural networks hard to train?

* The vanishing gradient problem
* What's causing the vanishing gradient problem? Unstable gradients in deep neural nets
* Unstable gradients in more complex networks
* Other obstacles to deep learning

Deep learning

* Introducing convolutional networks
* Convolutional neural networks in practice
* The code for our convolutional networks
* Recent progress in image recognition
* Other approaches to deep neural nets
* On the future of neural networks

Appendix: Is there a _simple_ algorithm for intelligence?

Acknowledgements

Frequently Asked Questions ------------------------- If you benefit from the book, please make a small donation. I suggest $5, but you can choose the amount. Alternately, you can make a donation by sending me Bitcoin, at address 1Kd6tXH5SDAmiFb49J9hknG5pqj7KStSAx -------------------------Sponsors

Deep Learning Workstations, Servers, and Laptops

Thanks to all the supporters who made the book possible, with especial thanks to Pavel Dudrenov. Thanks also to all the contributors to the Bugfinder Hall of Fame.

-------------------------
Resources

Michael Nielsen on Twitter

Book FAQ

Code repository

Michael Nielsen's project announcement mailing list

Deep Learning, book by Ian Goodfellow, Yoshua Bengio, and Aaron Courville

cognitivemedium.com

-------------------------
By Michael Nielsen / Dec 2019

_Neural Networks and Deep Learning_ is a free online book. The book will teach you about:

* Neural networks, a beautiful biologically-inspired programming paradigm which enables a computer to learn from observational data
* Deep learning, a powerful set of techniques for learning in neural networks

Neural networks and deep learning currently provide the best solutions to many problems in image recognition, speech recognition, and natural language processing. This book will teach you many of the core concepts behind neural networks and deep learning. For more details about the approach taken in the book, see here. Or you can jump directly to Chapter 1 and get started.

In academic work, please cite this book as: Michael A. Nielsen, "Neural Networks and Deep Learning", Determination Press, 2015.

This work is licensed under a Creative Commons Attribution-NonCommercial 3.0 Unported License. This means you're free to copy, share, and build on this book, but not to sell it. If you're interested in commercial use, please contact me.

Last update: Thu Dec 26 15:26:33 2019
