Are you over 18 and want to see adult content?

More Annotations

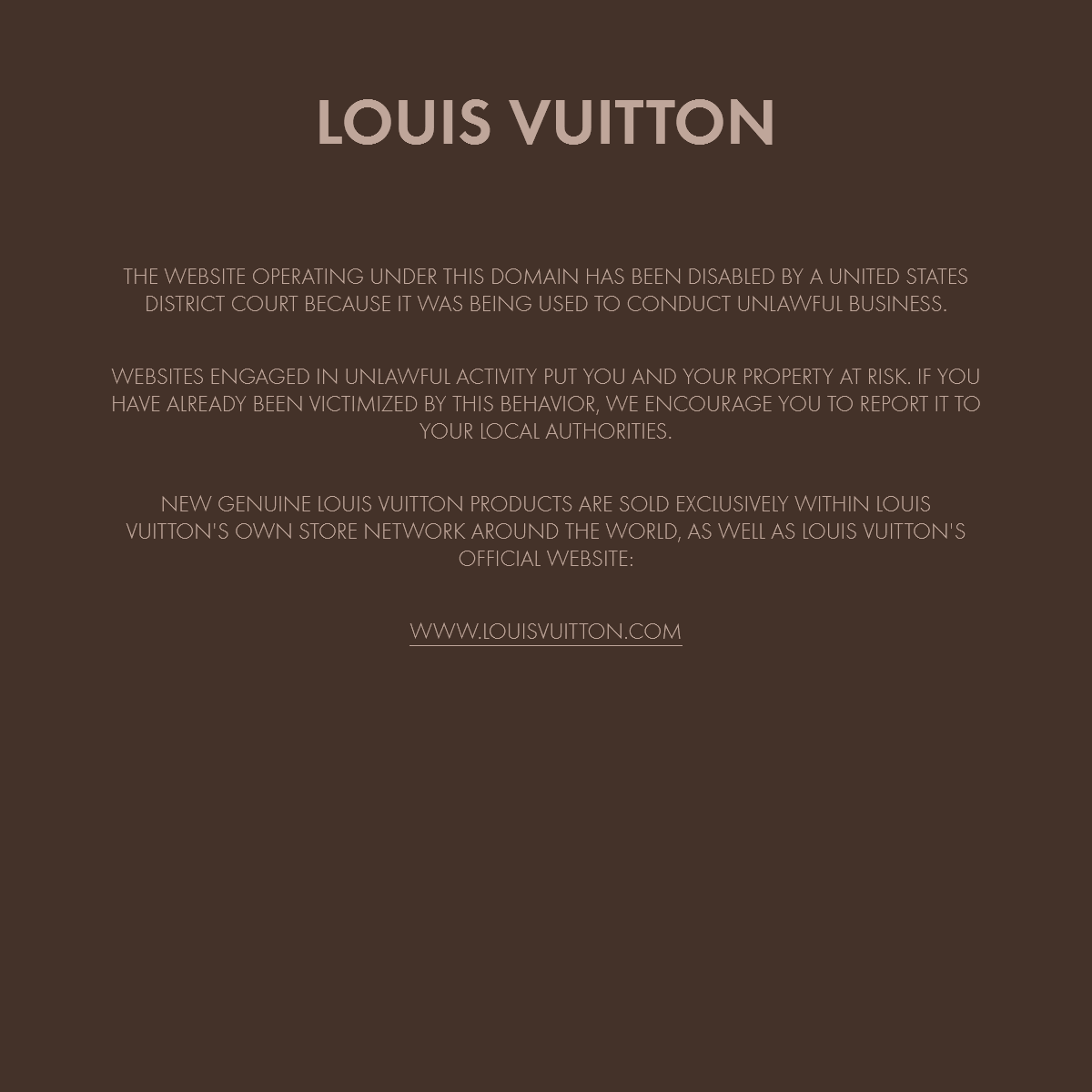

A complete backup of louisvuittondiaperbag.org

Are you over 18 and want to see adult content?

A complete backup of cherokeewholehealth.com

Are you over 18 and want to see adult content?

A complete backup of ukdissertation.co.uk

Are you over 18 and want to see adult content?

A complete backup of stephencaver.com

Are you over 18 and want to see adult content?

A complete backup of cheapwigshops.com

Are you over 18 and want to see adult content?

Favourite Annotations

A complete backup of custom-cheap-jersey.com

Are you over 18 and want to see adult content?

A complete backup of application-filing-service.com

Are you over 18 and want to see adult content?

A complete backup of surfsnowdonia.com

Are you over 18 and want to see adult content?

A complete backup of bitcoingrowthfund.com

Are you over 18 and want to see adult content?

Text

to

APACHE MXNET BLOG

Which Is the Strongest Video Action Recognition Algorithm? 22 Dec 2020 · Yi Zhu (朱毅), Amazon Applied Scientist. Short video kept growing explosively in 2020, and demand for video understanding grew with it. Whether for research or for shipping a project, choosing a video model that fits your application scenario is crucial.

JSALT 2019 MONTRÉAL: DIVE INTO DEEP LEARNING FOR NATURAL LANGUAGE PROCESSING

Time: Friday, June 14, 2019. Location: École de technologie supérieure, Montréal, Canada.

WHY WE STARTED THE APACHE MXNET BLOG

Half a year ago we started an experimental project: learning deep learning from scratch through code. We believe deep learning is a hands-on discipline. Only by implementing and experimenting yourself can you see how each detail affects the final result, and so apply deep learning to real problems.

MXBOARD: VISUALIZING MXNET DATA

Foreword. Deep neural networks have been controversial ever since they appeared. From a practical standpoint, designing and training a usable model is very difficult and involves extensive hyperparameter tuning, changes to the network architecture, trials of different optimization algorithms, and so on. From a theoretical standpoint, the mathematical theory behind deep neural networks is incomplete, which leaves people without a clear understanding of their basic principles.

APACHE MXNET CHINESE RESOURCES

Apache MXNet originated with a group of passionate Chinese students. It has since come into wide use around the world, and Chinese-speaking users have always been MXNet's most important user group. The MXNet community is working to add more Chinese resources so that Chinese-speaking users can read and discuss more easily. Here we list the main Chinese resources currently available:

FROM PYTORCH TO MXNET IN TEN MINUTES

Multidimensional arrays. For multidimensional arrays, PyTorch follows Torch's style and calls them tensors, while MXNet follows NumPy and calls them ndarrays. Below we create a two-dimensional matrix with every element initialized to 1.

KDD19: FROM SHALLOW TO DEEP LANGUAGE REPRESENTATIONS: PRE-TRAINING, FINE-TUNING, AND BEYOND

KDD19 Tutorial. Time: Thu, August 08, 2019, 9:30 am - 12:30 pm | 1:00 pm - 4:00 pm.

VARIATIONAL AUTOENCODERS WITH GLUON

Recent progress in variational autoencoders (VAE) has made them one of the most popular frameworks for building deep generative models. In this notebook, we will first introduce the necessary background. Then, we proceed to build a VAE model based on the paper Auto-Encoding Variational Bayes and apply it to ...

DEEP LEARNING

Deep Learning - The Straight Dope. This repo contains an incremental sequence of notebooks designed to teach deep learning, Apache MXNet (incubating), and the gluon interface. Our goal is to leverage the strengths of Jupyter notebooks to present prose, graphics, equations, and code together in one place.

SERIALIZATION

The practice of saving models is sometimes called checkpointing, and it is especially important for a number of reasons. 1. We can preserve and syndicate models that are trained once. 2. Some models perform best (as determined on validation data) at some epoch in the middle of training.

CONVOLUTIONAL NEURAL NETWORKS FROM SCRATCH

Convolutional neural networks incorporate convolutional layers. These layers associate each of their nodes with a small window, called a receptive field, in the previous layer, instead of connecting to the full layer. This allows us to first learn local features via transformations that are applied in the same way for the top right corner as ...

BINARY CLASSIFICATION WITH LOGISTIC REGRESSION

Over the last two tutorials we worked through how to implement a linear regression model, both from scratch and using Gluon to automate most of the repetitive work like allocating and initializing parameters, defining loss functions, and implementing optimizers. Regression is the hammer we reach for when we want to answer "how much?" or "how many ...

BATCH NORMALIZATION IN GLUON

In the preceding section, we implemented batch normalization ourselves using NDArray and autograd. As with most commonly used neural network layers, Gluon has batch normalization predefined, so this section is going to be straightforward.

BATCH NORMALIZATION FROM SCRATCH

The basic idea is to normalize and then apply a linear scale and shift to the mini-batch. For an input mini-batch \(B = \{x_1, \dots, x_m\}\), we want to learn the parameters \(\gamma\) and \(\beta\). The output of the layer is \(\{y_i = \mathrm{BN}_{\gamma,\beta}(x_i)\}\). The formulas are taken from Ioffe, Sergey, and Christian Szegedy, "Batch normalization: Accelerating deep ...

EXPONENTIAL SMOOTHING AND INNOVATION STATE SPACE MODEL

Innovation state space models (ISSMs) are an example of such models. They offer considerable flexibility in representing commonly occurring time series patterns, and they underlie the exponential smoothing methods. The idea behind ISSMs is to maintain a latent state vector \(l_t\) with recent information about level, trend, and seasonality factors.

LONG SHORT-TERM MEMORY (LSTM) RNNS

An LSTM block has mechanisms to enable "memorizing" information for an extended number of time steps. We use the LSTM block with the following transformations that map inputs to outputs across blocks at consecutive layers and consecutive time steps, where ⊙ is an element-wise multiplication operator ...
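For reference, the batch normalization transform summarized above (normalize, then scale and shift) is usually written as follows. This is the standard form from the Ioffe and Szegedy paper, not a copy of the notebook's own listing; the small constant \(\epsilon\), added for numerical stability, is not named in the text above.

```latex
\begin{aligned}
\mu_B &= \frac{1}{m}\sum_{i=1}^{m} x_i
  && \text{(mini-batch mean)} \\
\sigma_B^2 &= \frac{1}{m}\sum_{i=1}^{m} (x_i - \mu_B)^2
  && \text{(mini-batch variance)} \\
\hat{x}_i &= \frac{x_i - \mu_B}{\sqrt{\sigma_B^2 + \epsilon}}
  && \text{(normalize)} \\
y_i &= \gamma\,\hat{x}_i + \beta \equiv \mathrm{BN}_{\gamma,\beta}(x_i)
  && \text{(scale and shift)}
\end{aligned}
```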

TREE LSTM MODELING FOR SEMANTIC RELATEDNESS

In this case, we would like to build the grammatical tree structure of the sentences into the architecture of an LSTM recurrent neural network. This tutorial walks through tree LSTMs, an approach that does precisely that. The models here are based on the tree-structured LSTM by Kai Sheng Tai, Richard Socher, and Chris Manning.

PREDICTING THE FUTURE: THE GLUON TIME SERIES TOOLKIT (GLUONTS)

You can compare the metrics above with those of other models or with the business requirements of your forecasting application. For example, we can forecast with the Seasonal Naive method and compare against the results above. The Seasonal Naive method assumes the data has a fixed seasonality (in this example, 2016 data points make up one week, which is one season) and forecasts by copying previously observed training data according to that seasonality.
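The Seasonal Naive method described in the GluonTS snippet above can be sketched in a few lines of plain Python. This is a minimal illustration and not GluonTS's own implementation; the function name `seasonal_naive_forecast` and the toy series are assumptions made for the example.

```python
from typing import List, Sequence


def seasonal_naive_forecast(
    history: Sequence[float], season_length: int, horizon: int
) -> List[float]:
    """Forecast `horizon` steps by repeating the last full season of `history`.

    Hypothetical helper for illustration only.
    """
    if season_length <= 0:
        raise ValueError("season_length must be positive")
    if len(history) < season_length:
        raise ValueError("need at least one full season of history")
    last_season = list(history[-season_length:])
    # The forecast h steps ahead (0-based) copies the value observed
    # at the same position within the most recent season.
    return [last_season[h % season_length] for h in range(horizon)]


# Toy series with a season of 4 points; the post's example uses 2016 points per week.
history = [10.0, 20.0, 30.0, 40.0, 11.0, 21.0, 31.0, 41.0]
forecast = seasonal_naive_forecast(history, season_length=4, horizon=6)
print(forecast)
```

Because the forecast only repeats past observations, it makes a cheap baseline: a learned model that cannot beat it on the chosen metrics is probably not capturing anything beyond the seasonality.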

GLUONNLP V0.6: BRINGING REPRODUCIBLE BERT MODELS TO YOU

Reproducing BERT fine-tuning results on natural language understanding tasks, with scripts and logs as proof. What is GluonNLP's goal? To eliminate research results that cannot be reproduced! We provide training scripts and training logs that reproduce state-of-the-art results on RTE, MNLI, SST-2, MRPC, SQuAD 1.1, and SQuAD 2.0. In addition, our modular code lets you apply BERT to many kinds of tasks within a single framework.

IMPLEMENTING CROSS-GPU SYNCHRONIZED BATCH NORMALIZATION IN MXNET GLUON

The key to cross-GPU synchronized BN is obtaining the global mean \(\mu\) and variance \(\sigma\) during the forward pass, and the corresponding global gradients during the backward pass. The simplest implementation first synchronizes to compute the mean, sends it back to every device, and then synchronizes again to compute the variance, but that synchronizes twice. In fact, a single synchronization is enough. We use a ...
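The snippet above is cut off before it names the approach. One common way to get by with a single synchronization is for each device to send its local sum and sum of squares, and then to use the identity Var[x] = E[x^2] - (E[x])^2. Whether this is exactly what the post goes on to describe is an assumption. The sketch below uses plain Python lists in place of per-device tensors, and the helper names are hypothetical.

```python
from typing import List, Sequence, Tuple


def local_stats(batch: Sequence[float]) -> Tuple[float, float, int]:
    """Per-device statistics: sum, sum of squares, and element count."""
    return sum(batch), sum(x * x for x in batch), len(batch)


def global_mean_var(
    per_device: List[Tuple[float, float, int]]
) -> Tuple[float, float]:
    """Combine statistics gathered from all devices in one synchronization."""
    total_sum = sum(s for s, _, _ in per_device)
    total_sq = sum(q for _, q, _ in per_device)
    count = sum(c for _, _, c in per_device)
    mean = total_sum / count
    # Biased variance via Var[x] = E[x^2] - (E[x])^2.
    var = total_sq / count - mean * mean
    return mean, var


# Two simulated devices, each holding part of the global mini-batch.
device_batches = [[1.0, 2.0], [3.0, 4.0]]
mean, var = global_mean_var([local_stats(b) for b in device_batches])
print(mean, var)
```

The trade-off is numerical: subtracting two large, nearly equal quantities can lose precision when the mean is large relative to the spread, so a real implementation may accumulate in higher precision.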

ICCV 2019 TUTORIAL: EVERYTHING YOU NEED TO KNOW TO REPRODUCE SOTA DEEP LEARNING MODELS

Time: Sunday, October 27, 2019. Half Day - AM (0800-1215). Location: Auditorium, COEX Convention Center. Presenters: Zhi Zhang, Sam Skalicky, Muhyun Kim, Jiyang Kang.

ACCELERATING NEURAL NETWORK INFERENCE WITH MODEL QUANTIZATION

1. Introduction. In deep learning, inference means deploying a pre-trained neural network model into real business scenarios such as image classification, object detection, and online translation. Because inference faces users directly, inference performance is critical, especially for enterprise-grade products. Measuring inference ...

ABOUT - ZH.MXNET.IO

About the Apache MXNet blog. This is a blog maintained by members of the Apache MXNet community. Here we discuss cutting-edge deep learning research and applications.

GLUONCV 0.8 FOR STREET SCENE ANALYSIS

Street scene analysis is one of the most widely applied areas of computer vision, and more and more projects are being built around street scenes, such as generating an interactive virtual city or building your own self-driving car. Recently, the launch of the OpenBot project greatly reduced the cost of small robots. An old smartphone plus ...

HOW TO PUBLISH A POST

Body. Each section uses a second-level heading (##), because the first-level heading is reserved for the title. Using images: we can save images under img/ or reference a web URL. Kramdown supports image scaling, but it offers no particularly convenient way to add captions.

ACCELERATING DEEP LEARNING ON CPU WITH INTEL MKL-DNN

Note that if another version was installed previously, it is best to uninstall it first, for example with pip uninstall mxnet, or to install the new version in a virtual environment. Users can of course also build MXNet manually. To build MXNet with MKL-DNN, users need to install the packages MXNet requires, using the commands in the installation guide. Building MKL-DNN depends on cmake, so users must install cmake as well.

REPRODUCING WASSERSTEIN GAN WITH GLUONCV

Introduction. This post discusses the Wasserstein GAN newly added in GluonCV 0.3, including some WGAN implementation details and some pitfalls I ran into.

About WGAN. WGAN builds on DCGAN and replaces the KL distance with the Wasserstein distance. The advantage of the Wasserstein distance is that it still yields a distance even when two probability distributions do not overlap, whereas in that case the KL distance used in DCGAN ...

HANDS-ON WITH THE ALIBABA TIANCHI COMPETITION: CLOTHING ATTRIBUTE LABEL RECOGNITION

Alibaba's Tianchi algorithm competition platform recently launched the FashionAI Global Challenge on clothing attribute label recognition, with generous prize money. The first-place team will receive a prize of up to 500,000 RMB! Tempted? I certainly am, and many others probably are too ...

GLUONCV 0.3: BEYOND THE CLASSICS

Half a year ago we started the GluonCV project, hoping to provide a reliable deep learning computer vision library that can reproduce the results of published papers. Over the past few months, contributors have dug up a large number of hidden details in papers and implementations, and have run extensive experiments on every detail of model training. We were excited to find that we not only ...

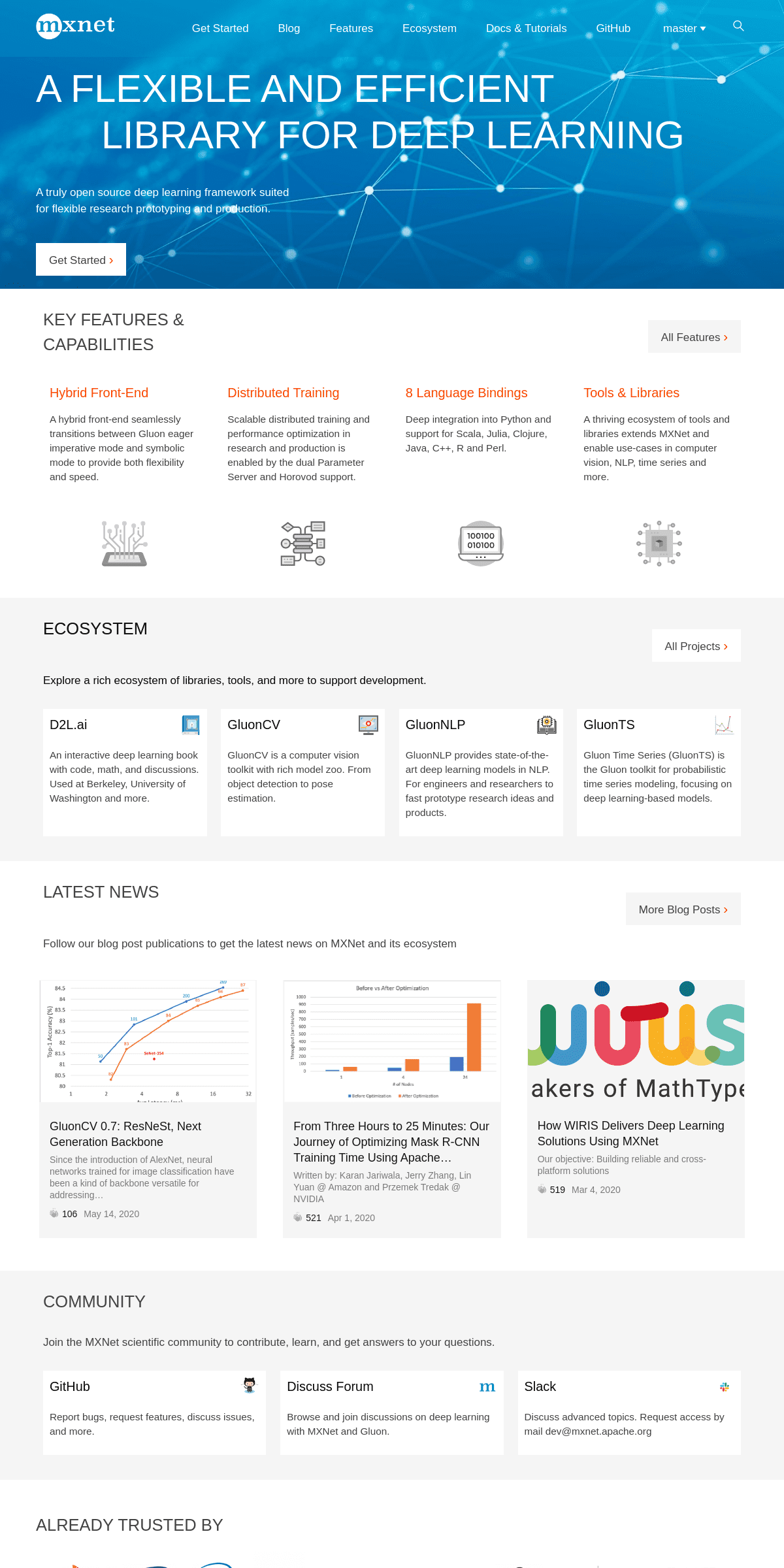

A FLEXIBLE AND EFFICIENT LIBRARY FOR DEEP LEARNING

A truly open source deep learning framework suited for flexible research prototyping and production.

KEY FEATURES & CAPABILITIES

HYBRID FRONT-END

A hybrid front-end seamlessly transitions between Gluon eager imperative mode and symbolic mode to provide both flexibility and speed.

DISTRIBUTED TRAINING

Scalable distributed training and performance optimization in research and production are enabled by the dual Parameter Server and Horovod support.

8 LANGUAGE BINDINGS

Deep integration into Python and support for Scala, Julia, Clojure, Java, C++, R and Perl.

TOOLS & LIBRARIES

A thriving ecosystem of tools and libraries extends MXNet and enables use cases in computer vision, NLP, time series and more.

ECOSYSTEM

Explore a rich ecosystem of libraries, tools, and more to support development.

D2L.AI

An interactive deep learning book with code, math, and discussions. Used at Berkeley, University of Washington and more.

GLUONCV

GluonCV is a computer vision toolkit with a rich model zoo, covering tasks from object detection to pose estimation.

GLUONNLP

GluonNLP provides state-of-the-art deep learning models in NLP, so that engineers and researchers can quickly prototype research ideas and products.

GLUONTS

Gluon Time Series (GluonTS) is the Gluon toolkit for probabilistic time series modeling, focusing on deep learning-based models.

LATEST NEWS

Follow our blog post publications to get the latest news on MXNet and its ecosystem.

GluonCV 0.7: ResNeSt, Next Generation Backbone

Since the introduction of AlexNet, neural networks trained for image classification have been a kind of backbone versatile for addressing…

May 14, 2020

From Three Hours to 25 Minutes: Our Journey of Optimizing Mask R-CNN Training Time Using Apache…

Written by: Karan Jariwala, Jerry Zhang, Lin Yuan @ Amazon and Przemek Tredak @ NVIDIA

Apr 1, 2020

How WIRIS Delivers Deep Learning Solutions Using MXNet

Our objective: Building reliable and cross-platform solutions

Mar 4, 2020

COMMUNITY

Join the MXNet scientific community to contribute, learn, and get answers to your questions.

GITHUB

Report bugs, request features, discuss issues, and more.

DISCUSS FORUM

Browse and join discussions on deep learning with MXNet and Gluon.

SLACK

Discuss advanced topics. Request access by emailing dev@mxnet.apache.org.

RESOURCES

* Mailing lists

* Developer Wiki

* Jira Tracker

* Github Roadmap

* MXNet Discuss forum

* Contribute To MXNet

* apache/incubator-mxnet

* apachemxnet

A flexible and efficient library for deep learning. Apache MXNet is an effort undergoing incubation at The Apache Software Foundation (ASF), sponsored by the Apache Incubator. Incubation is required of all newly accepted projects until a further review indicates that the infrastructure, communications, and decision making process have stabilized in a manner consistent with other successful ASF projects. While incubation status is not necessarily a reflection of the completeness or stability of the code, it does indicate that the project has yet to be fully endorsed by the ASF. "Copyright © 2017-2018, The Apache Software Foundation. Apache MXNet, MXNet, Apache, the Apache feather, and the Apache MXNet project logo are either registered trademarks or trademarks of the Apache Software Foundation."
